DESCRIPTIVE STATISTICS

DATA HANDLING AND ANALYSIS - PART TWO

DESCRIPTIVE DATA SCENARIO 1

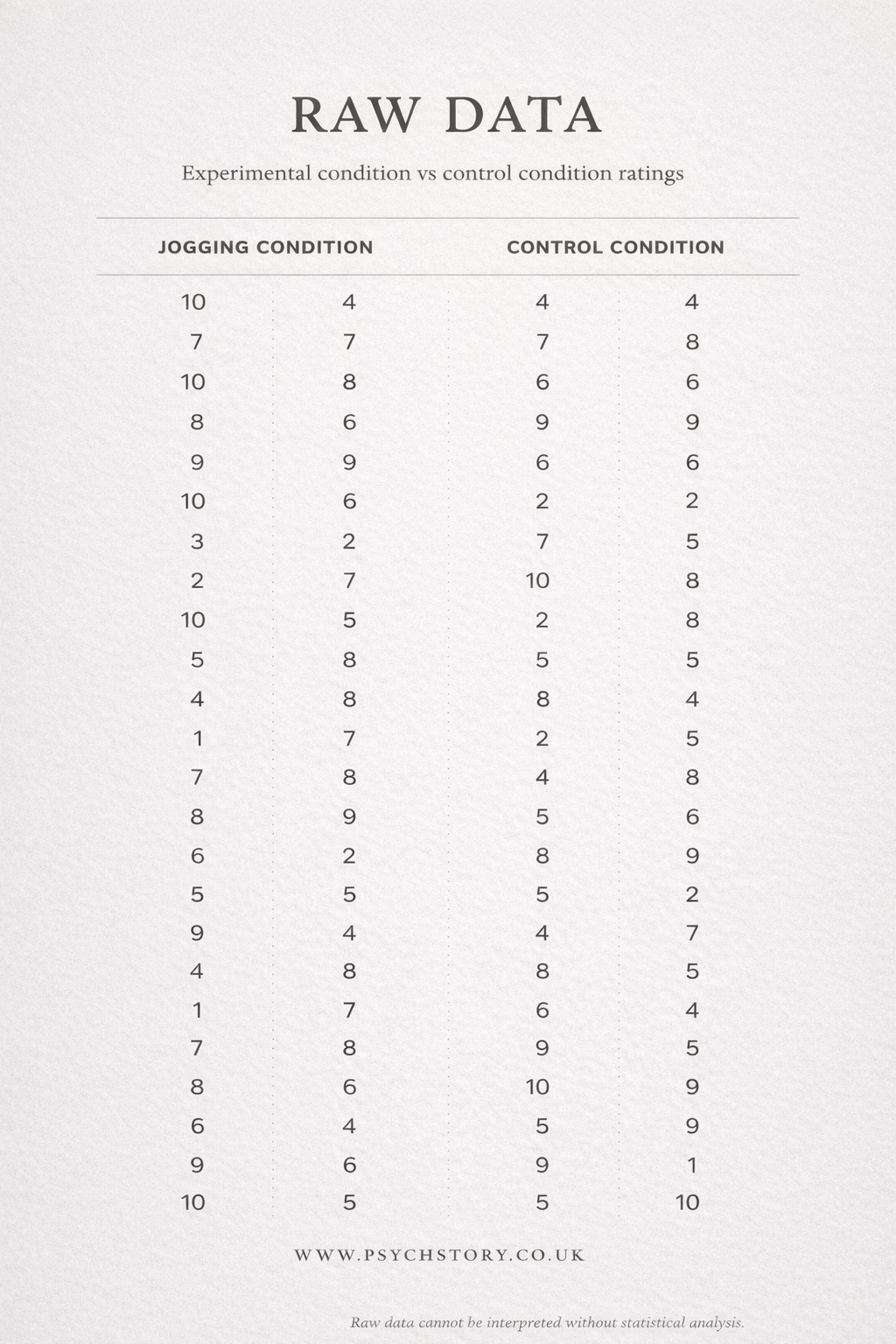

LOOK AT TABLE 1: RAW DATA RATINGS OF THE OPPOSITE SEX FROM JOGGERS AND NON-JOGGERS

The raw table shows attractiveness ratings (on a scale from 1 to 10) that participants gave to photos of the opposite sex. Before making the ratings, participants were randomly allocated to one of two conditions:

Jogging condition: Participants jogged in place for 10 minutes.

Control condition: Participants did no exercise.

EYEBALL TEST ACTIVITY

Imagine you are the researcher. You have just collected all this data and now want to know the results.

Did the 10 minutes of jogging make participants rate the photos as more attractive?

Quickly scan the table (the “eyeball test”) and decide:

1. The jogging condition performed better.

2. The control (non-jogging) condition performed better.

3. It is impossible to tell which condition performed better.

WHY THE EYEBALL TEST IS NOT ENOUGH

Most students choose option 3 – and they are right!

When you stare at more than 40 raw scores, your eyes quickly glaze over. The numbers jump around, some high, some low, and it is almost impossible to see any clear pattern. You cannot easily tell whether the joggers rated the photos higher overall or whether the two groups are actually very similar. This is exactly why researchers never rely on the eyeball test alone. Raw data is messy and overwhelming. Even though it contains all the information, it does not easily show us the overall story of what happened in the experiment.

That is where descriptive statistics come in.

INTRODUCTION TO DESCRIPTIVE STATISTICS

After gathering raw data, researchers need a way to summarise it numerically so that patterns become visible. Descriptive statistics achieve exactly this. They transform a large set of individual scores into a clear and manageable summary, allowing us to see the main features of the data at a glance. Descriptive statistics work by calculating measures of central tendency, which show where the typical or average score lies, and measures of dispersion, which reveal how spread out the scores are around that centre. By organising the data in this way, researchers can move beyond the confusion of raw numbers and begin to understand the real story behind their experiment.

THE VALUE OF DESCRIPTIVE STATISTICS IN RESEARCH

In any study, descriptive statistics play a vital role in determining whether the experiment has produced useful and interpretable findings. For example, by comparing mean ratings between the jogging and non-jogging conditions, a researcher can assess whether one group tended to give higher attractiveness ratings than the other. However, it is important to remember that descriptive statistics only describe what is in the data. They do not tell us whether any observed difference occurred by chance or whether the results are statistically significant. For that, researchers must turn to inferential statistics.

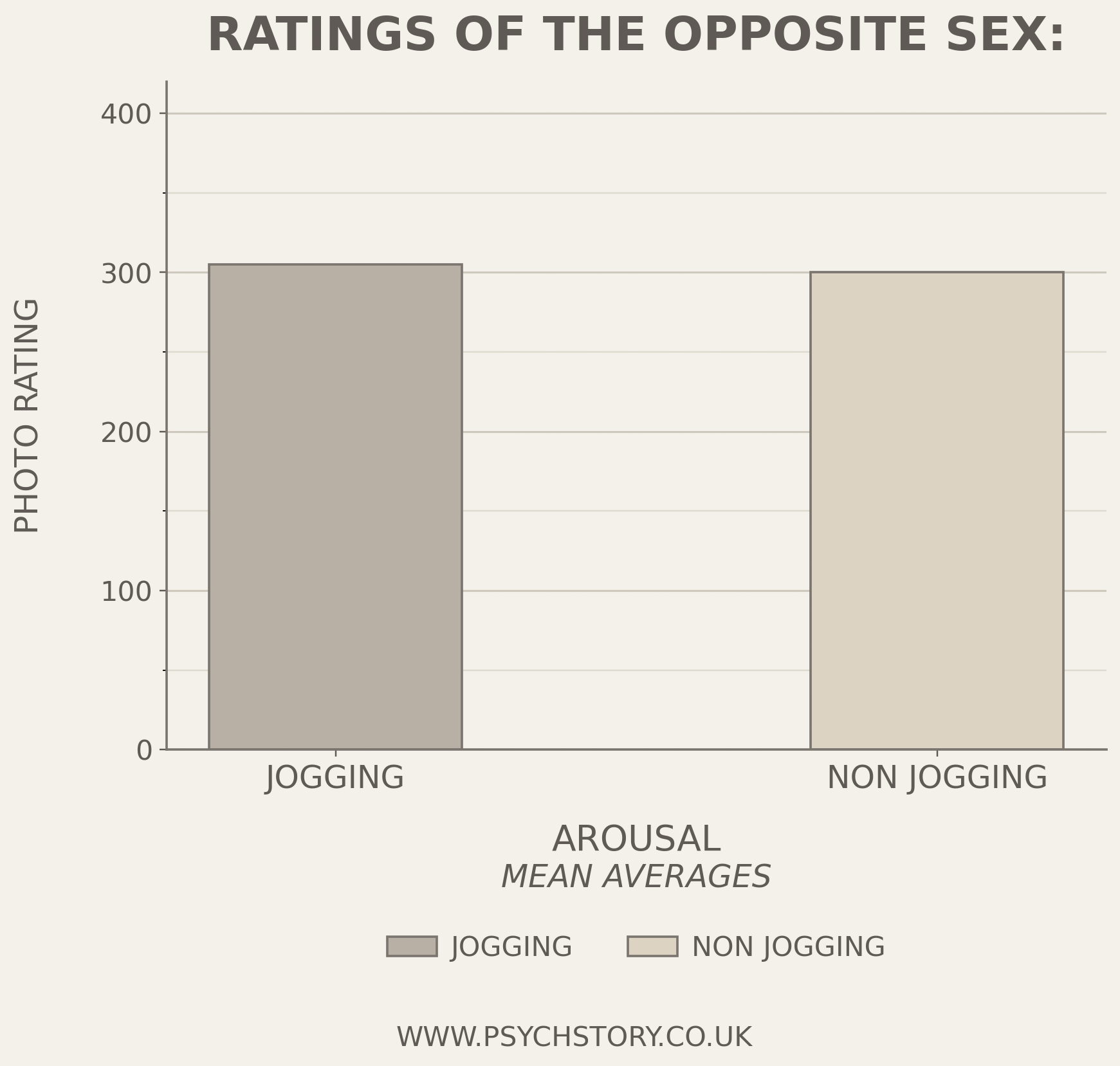

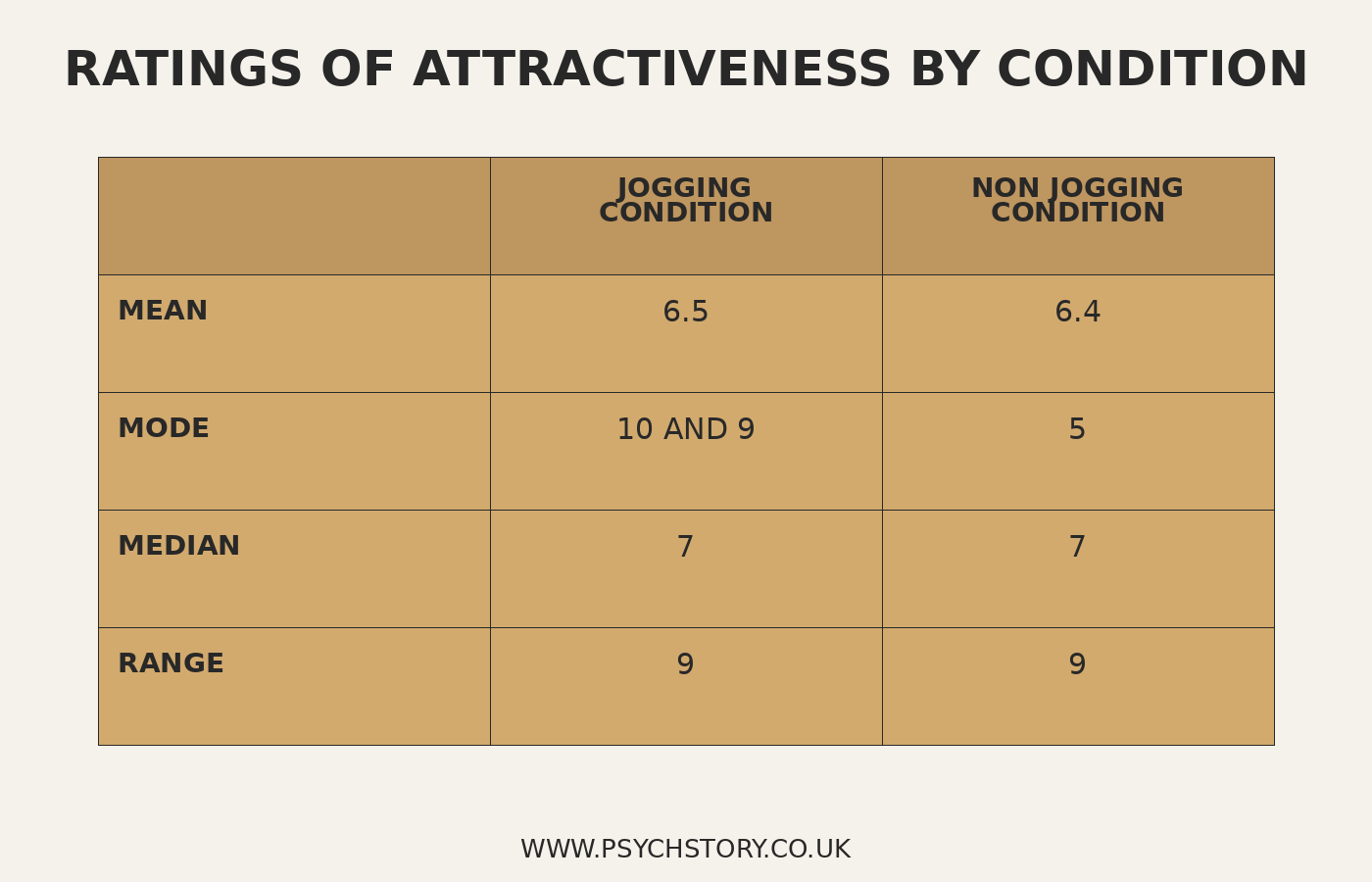

NOW LOOK AT GRAPH ONE AND TABLE TWO BELOW.

Once the measures of central tendency and dispersion have been calculated, researchers present them in clear and accessible formats. This allows both the researcher and others to interpret the results quickly and accurately. Graph One shows the mean attractiveness ratings for the two conditions, while Table Two displays several key descriptive statistics side by side.

Question: Given the information presented in Table 2 and Graph 1, is it appropriate to conclude whether the study has succeeded at this research stage?

The data from the experiment has been made interpretable. The primary function of descriptive statistics at this juncture is to help researchers determine the effectiveness of their study or experiment. Comparing the two conditions is no longer a matter of guesswork.

GRAPH ONE: RATINGS OF THE OPPOSITE SEX: JOGGERS V NON-JOGGERS

TABLE TWO: RATINGS OF THE OPPOSITE SEX: JOGGERS V NON-JOGGERS

DESCRIPTIVE STATISTICS: WHAT YOU NEED TO KNOW

SPECIFICATION:

Descriptive statistics: measures of central tendency – mean, median, mode; calculation of mean, median and mode; measures of dispersion; range and standard deviation; calculation of range; calculation of percentages.

Presentation and display of quantitative data: graphs, tables, scattergrams, bar charts.

Distributions: normal and skewed distributions; characteristics of normal and skewed distributions.

MEASURES OF CENTRAL TENDENCY

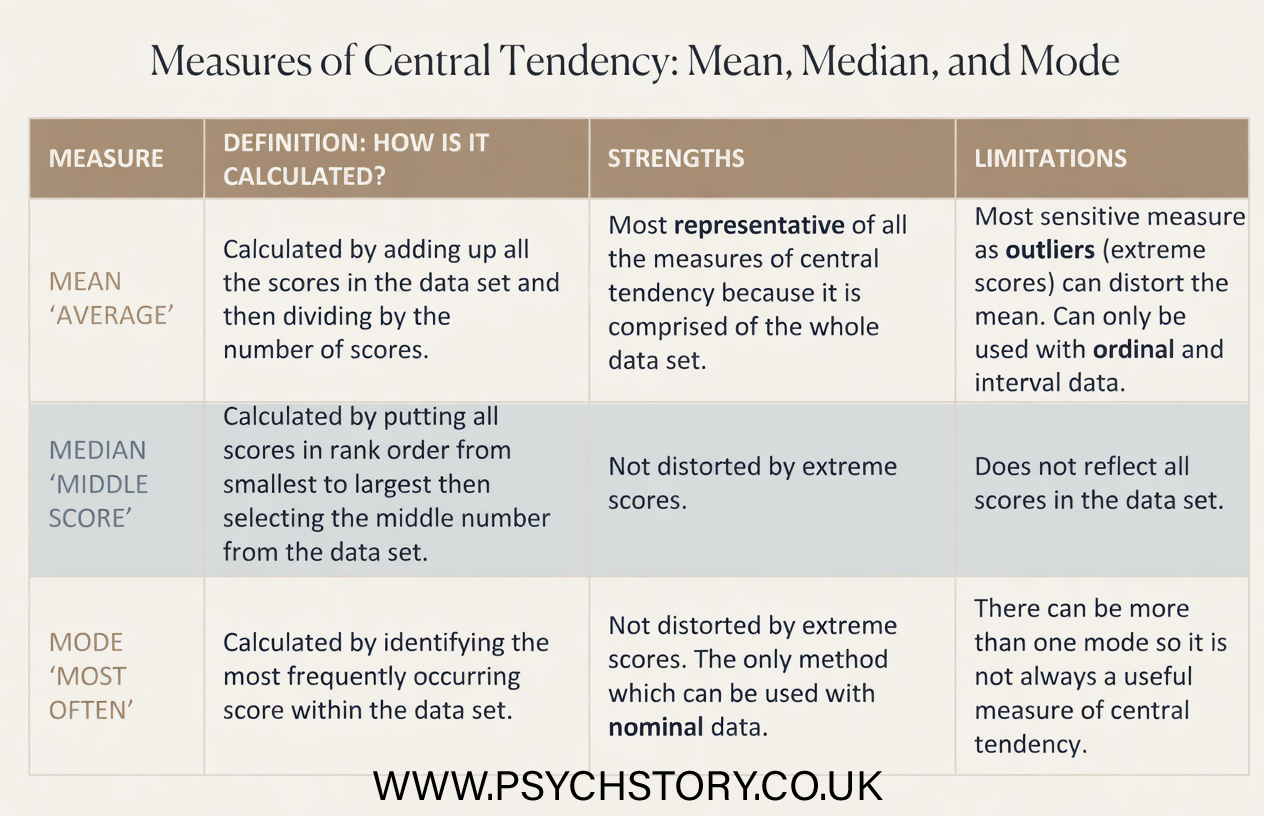

Questions such as “How many miles do I run each day?” or “How much time do I spend on my iPhone each day?” can be hard to answer because the results vary daily. It’s better to ask, “How many miles do I usually run?” or “On average, how much time do I spend on my iPhone?”. In this section, we will look at three methods of measuring central tendency: the mean, the median and the mode. Each gives a single value that might be considered typical, and each has its strengths and weaknesses. The three measures are calculated differently, but all are concerned with finding a ‘typical’ value from the middle of the data. For any given variable, each member of the population will have a particular value of that variable; for example, the number of positive ratings of photographs of the opposite sex. These values are called data. We can use measures of central tendency on the entire population to obtain a single value, or we can use them on a subset or sample to estimate the central tendency for the population.

OUTLINE, CALCULATE AND EVALUATE THE USE OF DIFFERENT MEASURES OF CENTRAL TENDENCY:

MEAN

MEDIAN

MODE

This could include saying which one you would use for some data, e.g. 2, 2, 3, 2, 3, 2, 3, 2, 97 - would you use mean or median here?
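The question above can be checked quickly. The following is a minimal sketch using Python's standard `statistics` module on the nine scores quoted in the text; the variable names are illustrative only.

```python
from statistics import mean, median, mode

# Data set quoted in the text above; 97 is the extreme score.
scores = [2, 2, 3, 2, 3, 2, 3, 2, 97]

mean_score = mean(scores)      # uses every value, so 97 drags it upwards
median_score = median(scores)  # middle value of the ordered scores
mode_score = mode(scores)      # most frequently occurring value

print(f"mean   = {mean_score:.2f}")
print(f"median = {median_score}")
print(f"mode   = {mode_score}")
```

The mean comes out close to 13, a value that none of the first eight scores is anywhere near, whereas the median stays at 2. This suggests the median is the safer choice for this data set.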

THE MEAN

Perhaps the most widely used measure of central tendency is the mean. The mean is what people are most often referring to when they say ‘average’: it is the arithmetic average of a set of data. It is the most sensitive of all the measures of central tendency as it considers all values in the dataset. Whilst this is a strength, as it means all the data is being considered, the sensitivity of the mean must be weighed when deciding which measure of central tendency to use. It can misrepresent the data set if extreme scores are present.

The mean is calculated by adding all the values and dividing the sum by the number of values. The result should lie somewhere between the dataset's minimum and maximum. If it does not, there is an error in the calculation!

For example, an apprentice sits five examinations and gets 94%, 80%, 89%, 88%, and 99%. To calculate their mean score, you would add all the scores together (94+80+89+88+99 = 450) and then divide by the number of scores there are (450÷5 = 90). This gives a mean score of 90%.
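The same calculation can be written out step by step. This is a small sketch in plain Python, using the five examination scores from the example; it also applies the sanity check mentioned above, that the mean must lie between the lowest and highest score.

```python
# The apprentice's five examination scores from the example (percentages).
scores = [94, 80, 89, 88, 99]

total = sum(scores)         # step 1: add all the scores together
count = len(scores)         # step 2: count how many scores there are
mean_score = total / count  # step 3: divide the total by the count

# Sanity check: a correctly calculated mean always lies between min and max.
mean_is_plausible = min(scores) <= mean_score <= max(scores)

print(f"{total} / {count} = {mean_score}")
print("mean lies between min and max:", mean_is_plausible)
```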

ADVANTAGES OF THE MEAN

Sensitive to Precise Data: The mean accounts for every value in the dataset, making it a highly sensitive measure that reflects the data's nuances.

Mathematically Manageable: It fits nicely into further statistical analyses and mathematical calculations, facilitating various statistical tests.

Widely Used and Understood: As a standard measure of central tendency, the mean is widely recognised and easily interpreted.

DISADVANTAGES OF THE MEAN

Affected by Extreme Values: The mean is sensitive to outliers, which can skew the results and provide a misleading picture of the dataset. In the example above, a mean of 90% looks reasonable, as all five scores are close to this value. However, if we now imagine that the apprentice received 17% instead of 94% on their first paper, the mean changes completely and the apprentice’s grade profile drops drastically: 17+80+89+88+99 = 373, and 373 ÷ 5 = 74.6. This mean of 74.6% is lower than every one of the other four scores, so it no longer represents the apprentice's typical performance. The 17% is an anomaly, and anomalies bias the mean.

Not Always Representative: In highly skewed distributions, the mean may not accurately reflect the data's central tendency.

Inapplicable to Nominal Data: The mean cannot be calculated for nominal or categorical data, limiting its applicability.
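The effect of a single extreme value, described in the first disadvantage above, can be seen by comparing the two versions of the apprentice's scores. This is a sketch only; the median is included for comparison.

```python
from statistics import mean, median

original = [94, 80, 89, 88, 99]      # scores from the worked example
with_outlier = [17, 80, 89, 88, 99]  # first paper changed from 94% to 17%

for label, scores in [("original", original), ("with outlier", with_outlier)]:
    print(f"{label:13} mean = {mean(scores):5.1f}   median = {median(scores)}")
```

The mean falls from 90 to 74.6, while the median moves by only one mark (from 89 to 88), which is why the median is often preferred when an anomaly is present.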

THE MEDIAN

When a data set contains extreme values, the mean may not give a true representation of the data, so the median can be used instead. The median is not affected by extreme scores, so it is ideal for a heavily skewed data set. It is also easy to calculate, as it is simply the middle value of the ordered data set.

Example: If there is an odd number of scores, then the median is the number which lies directly in the middle when you arrange the scores from lowest to highest. Take a new data set of five scores, placed in order: 12%, 67%, 71%, 72%, and 79%. The median is the third value, which is 71%. Interestingly, the mean of this data set is only 60.2% (301 ÷ 5). The median is the more representative score here because, unlike the mean, it is not distorted by the extreme score of 12%.

If there is an even number of values within the data set, two values will fall directly in the middle. In this case, the midpoint between these two values is calculated. To do this, the two middle scores are added together and divided by two. This value will then be the median score.

Example: If the above data set included a sixth score, e.g., 12%, 34%, 67%, 71%, 72%, and 79%, then the median score would be 69%: (67% + 71%) ÷ 2.
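The odd and even cases can be sketched as a single procedure. The function name `median_of` is hypothetical and chosen for illustration; Python's built-in `statistics.median` does the same job.

```python
def median_of(scores):
    """Return the middle value of the scores (midpoint of the two middle values if even)."""
    if not scores:
        raise ValueError("cannot take the median of an empty data set")
    ordered = sorted(scores)  # always put the scores in order first
    n = len(ordered)
    middle = n // 2
    if n % 2 == 1:
        return ordered[middle]  # odd count: one middle value
    # even count: add the two middle values and divide by two
    return (ordered[middle - 1] + ordered[middle]) / 2

five_scores = [12, 67, 71, 72, 79]
six_scores = [12, 34, 67, 71, 72, 79]

print(median_of(five_scores))  # odd number of scores
print(median_of(six_scores))   # even number of scores
```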

ADVANTAGES OF THE MEDIAN

Resistant to Outliers: Unlike the mean, the median is not skewed by extreme values, making it a more robust measure in the presence of outliers.

Represents the Middle Value: It accurately reflects the central point of a distribution, especially in skewed datasets, by dividing the dataset into two equal halves.

Applicable to Ordinal Data: The median can be calculated for ordinal data (data that can be ranked) as well as interval and ratio data, offering wider applicability than the mean.

DISADVANTAGES OF THE MEDIAN

Less Sensitive to Data Changes: The median may not reflect small changes in the data, especially if these changes occur away from the middle of the dataset.

Not as Mathematically Handy: It doesn't lend itself as easily to further statistical analysis as the mean, due to its nonparametric nature.

Difficult to Handle in Open-Ended Distributions: Calculating the median can be challenging for distributions with open-ended intervals since the exact middle value may not be clear.

THE MODE

The third measure of central tendency is the mode. This refers to the value or score that appears most frequently in the data set. While easy to calculate, it can be quite unrepresentative of the data set. Imagine that the lowest value in the example data set (12%) appeared twice. It would then be the mode, even though it does not represent the data set as a whole.

ADVANTAGES OF THE MODE

Versatile with Data Types: The mode is unique because it can be applied to categorical data, unlike the mean and median. For instance, in survey data on modes of transportation such as 'car', 'bus', or 'walk', the mode identifies the most frequently occurring response, offering valuable insights into the most common category.

DISADVANTAGES OF THE MODE

Potential for Multiple Modes: A dataset can have more than one mode, resulting in a bimodal situation with two modes or a multimodal situation with several modes. This can complicate the interpretation of the data.

Possibility of No Mode: If all data points are unique and occur only once, the dataset has no mode. This lack of a mode can limit the usefulness of the mode as a measure of central tendency in such datasets.
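The three situations described above (one mode, several modes, no mode) can be sketched with a frequency count. The helper name `modes` and the example data are hypothetical. Note that Python's own `statistics.multimode` returns every value when all occur once, so this sketch instead follows the convention in the text and reports no mode in that case.

```python
from collections import Counter

def modes(values):
    """Return a list of the most frequent value(s); empty if every value occurs only once."""
    if not values:
        return []
    counts = Counter(values)
    highest = max(counts.values())
    if highest == 1 and len(counts) > 1:
        return []  # all values unique: treated here as "no mode"
    return [value for value, count in counts.items() if count == highest]

transport = ["car", "bus", "car", "walk", "car", "bus"]  # categorical data
bimodal = [3, 5, 5, 7, 7, 9]                             # two modes
all_unique = [1, 2, 3, 4]                                # no mode

print(modes(transport))
print(modes(bimodal))
print(modes(all_unique))
```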

Exam Hint: When asked to calculate any measure of central tendency, show your calculations. Often, the question will be worth two or three marks, so it is important to show how you reached your final answer for maximum marks!

DESCRIPTIVE DATA SCENARIO 2:

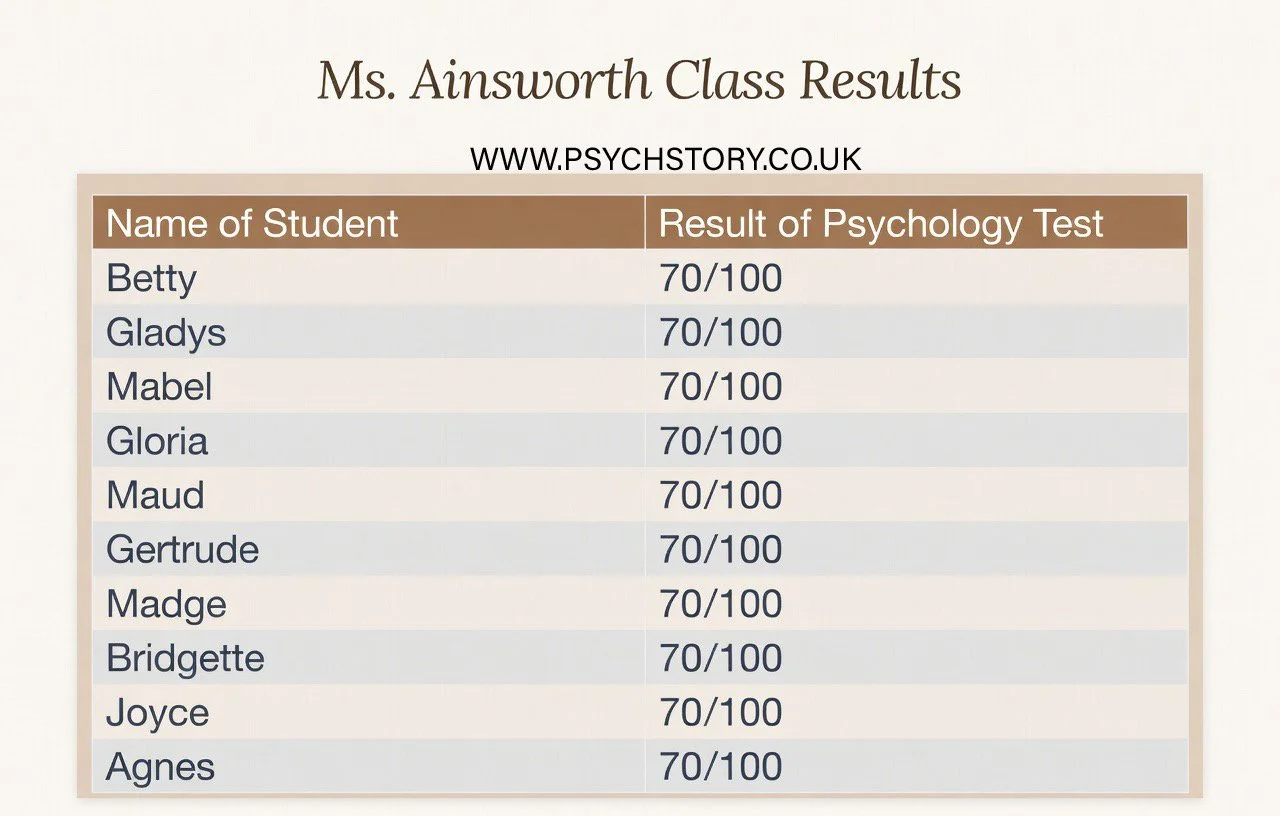

You are considering enrolling in an A-level Psychology course at a college. You have heard mixed reviews about the quality of teaching and want to make the best possible choice about which class to join. To help you decide, you request recent exam performance data from four different teachers.

The information you receive is limited:

Ms Ainsworth’s class

Mean average score: 70

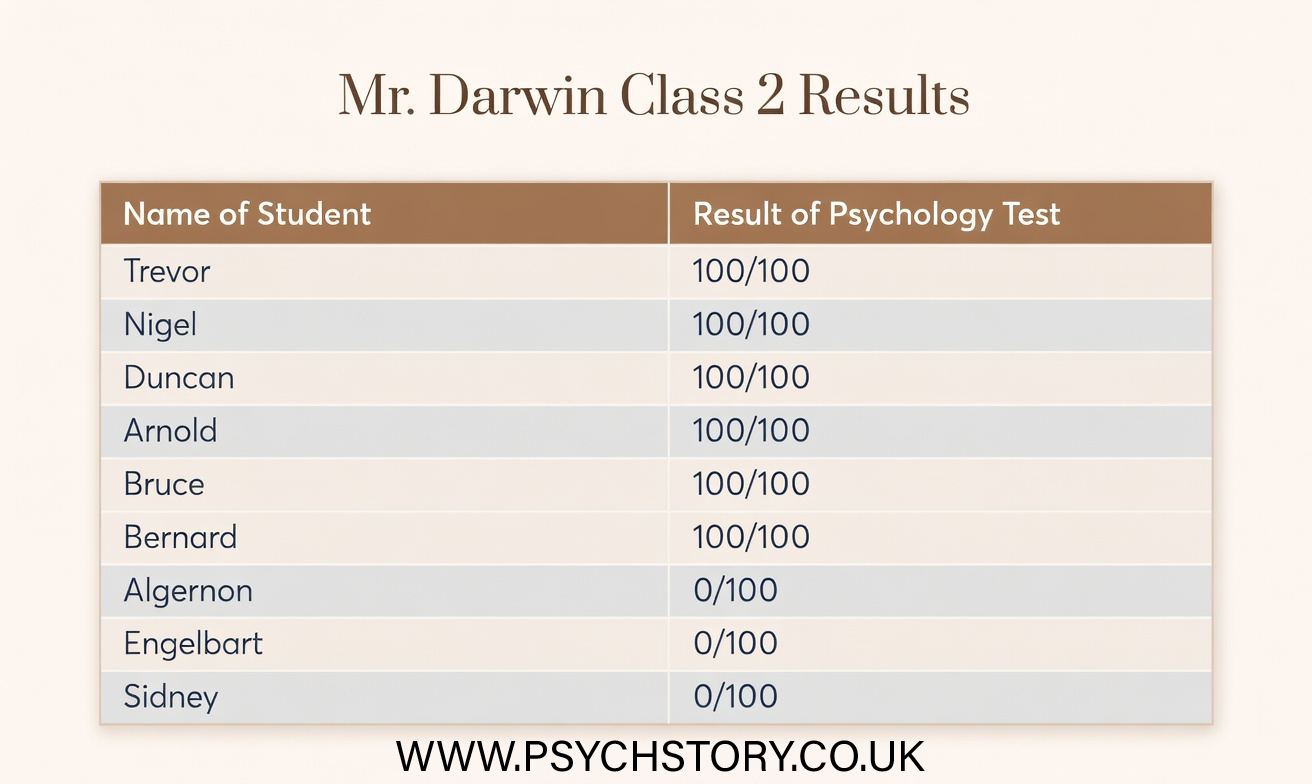

Mr Darwin’s class

Mean average score: 70

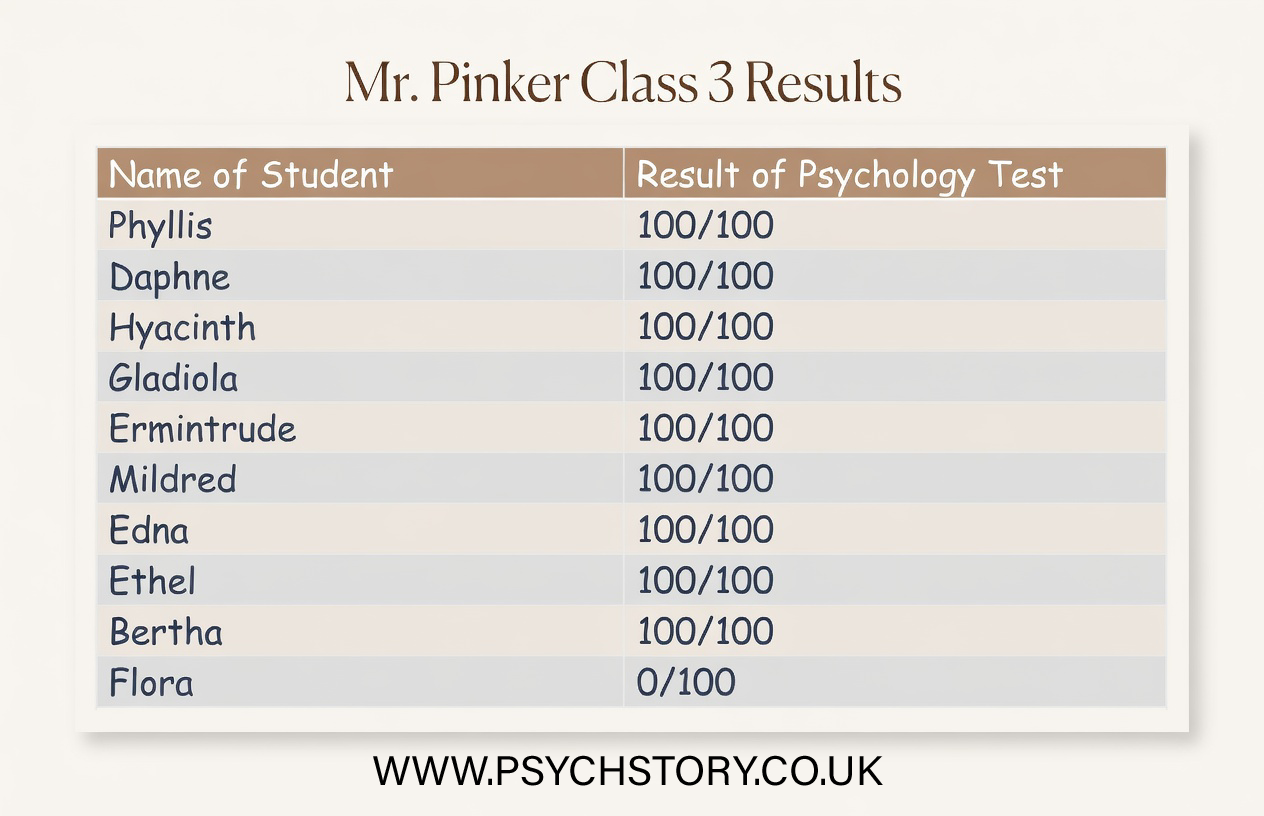

Mr Pinker’s class

Range: 101

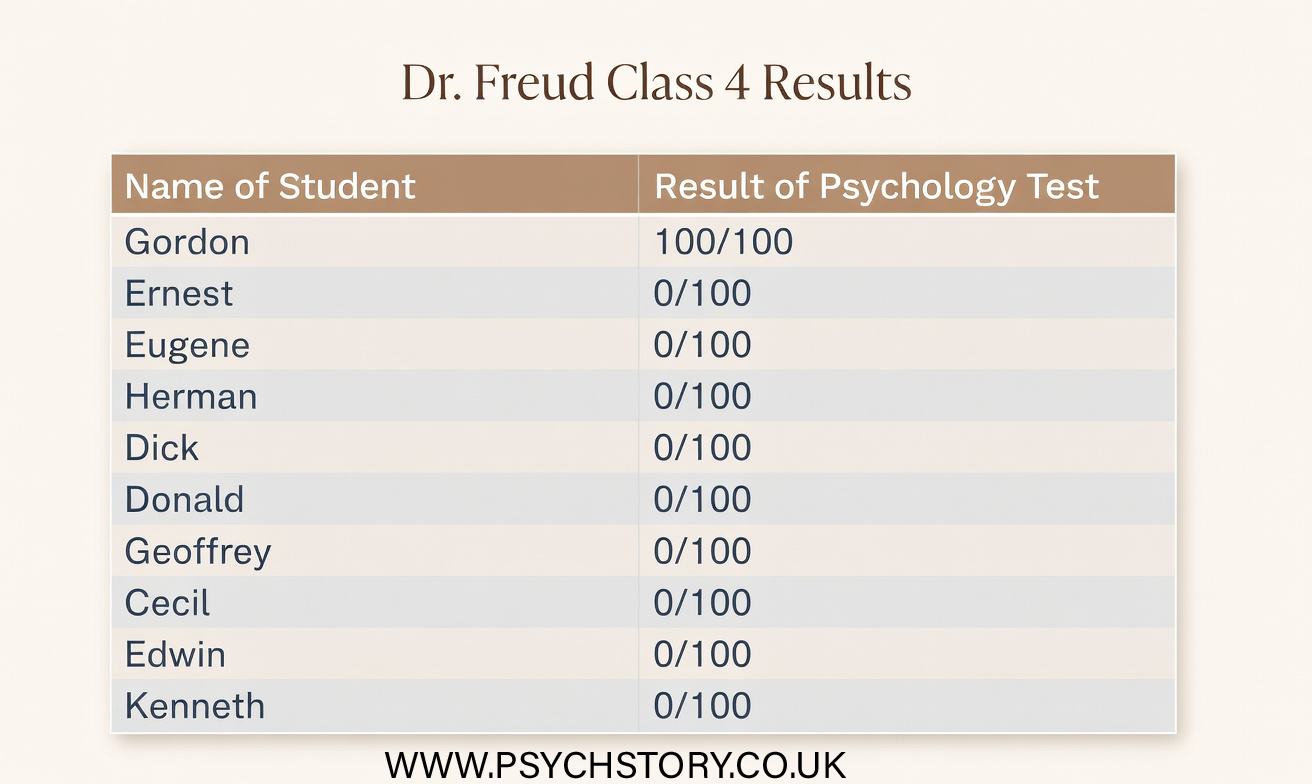

Dr Freud’s class

Range: 101

At this stage, you have not been given the raw scores or any other descriptive statistics.

QUESTION 1

Based only on the information above, which class would you choose, and why?

QUESTION 2

Having now seen the raw data, explain what the original statistics failed to show. In your answer, refer to at least two classes and explain why the first set of information was not enough to make a secure judgment.

QUESTION 3

If you could ask for one additional descriptive statistic for all four classes before making your choice, which would be most useful, and why?

OPTIONAL EXTENSION

Which class now appears to be the strongest, and what features of the data led you to that conclusion?

CONCLUSION

The Ainsworth and Darwin data demonstrate that a mean of 70 can be highly misleading without additional information about the distribution of scores. In Ms Ainsworth’s class, most scores cluster closely around the mean, so it accurately reflects the typical student’s performance. In contrast, Mr Darwin’s class shows a wide spread of scores from very low to very high, with the mean of 70 arising only because extreme values balance each other out. The same measure of central tendency, therefore, supports very different interpretations depending on how the data are distributed.

The Pinker and Freud data reveal a parallel problem with the range. Both classes have an identical range of 101, yet the patterns are entirely different. In Mr Pinker’s class, most students achieve high scores, with one unusually low score artificially stretching the range. In Dr Freud’s class, most students achieve very low scores, with one unusually high score inflating the range. The range is driven solely by the two extreme values and tells us nothing about where most students are actually performing.

These scenarios highlight why relying on a single descriptive statistic is rarely enough to make a secure judgment. The original information (means for two classes and ranges for the other two) failed to reveal the shape and consistency of the distributions, making it impossible to reliably evaluate teaching quality. Raw scores alone are overwhelming and do not easily show patterns; descriptive statistics organise the data and make key features visible. However, no single statistic is sufficient on its own.

Measures of central tendency (mean, median, mode) indicate where the centre of the data lies, while measures of dispersion (range, interquartile range, standard deviation) show how spread out the scores are. In Ainsworth’s class, the mean works well because scores are clustered, but in Darwin’s class, the median would provide a better picture of typical performance as it is unaffected by extremes. Similarly, in Pinker’s and Freud’s classes, the interquartile range or standard deviation would better reveal the performance of the middle 50% of students or the average deviation from the mean, rather than being distorted by outliers.

Taken together, these statistics allow us to move beyond isolated numbers and properly interpret both the level and the consistency of performance. This is essential when evaluating real-world data, such as choosing a class or assessing whether research findings support a study’s aims. Descriptive statistics describe the data we have; they do not tell us whether results occurred by chance or allow causal conclusions.

Finally, it is crucial to select the appropriate statistic for the level of measurement and the purpose of the analysis. Choosing the right descriptive tools is not an optional extra but a fundamental part of accurate data interpretation. There is more about this in the section on LEVELS OF MEASUREMENT.
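The argument of this conclusion can be illustrated with made-up numbers. The scores below are HYPOTHETICAL, invented purely for illustration (the real class data appear only in the images), and the range is computed as highest minus lowest without adding 1. The sketch shows two classes with the same mean but very different spread, and two classes with the same range but very different typical scores.

```python
from statistics import mean, median, pstdev

# HYPOTHETICAL scores, invented for illustration; not the real class data.
clustered = [66, 68, 69, 70, 70, 71, 72, 74]    # in the spirit of Ms Ainsworth's class
spread_out = [35, 45, 60, 70, 70, 85, 95, 100]  # in the spirit of Mr Darwin's class
mostly_high = [0, 85, 88, 90, 92, 95, 97, 100]  # in the spirit of Mr Pinker's class
mostly_low = [0, 5, 8, 10, 12, 15, 18, 100]     # in the spirit of Dr Freud's class

def summarise(name, scores):
    """Print the mean, median, simple range and standard deviation of one class."""
    simple_range = max(scores) - min(scores)
    print(f"{name:12} mean={mean(scores):6.2f} median={median(scores):6.2f} "
          f"range={simple_range:3d} sd={pstdev(scores):6.2f}")

for name, scores in [("clustered", clustered), ("spread_out", spread_out),
                     ("mostly_high", mostly_high), ("mostly_low", mostly_low)]:
    summarise(name, scores)
```

Reading the four lines side by side makes the point of the scenario: the mean alone cannot separate the first pair, and the range alone cannot separate the second pair.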

MEASURES OF DISPERSION

SPECIFICATION: OUTLINE, CALCULATE AND EVALUATE THE USE OF DIFFERENT MEASURES OF DISPERSION:

RANGE

INTERQUARTILE RANGE

NORMAL DISTRIBUTIONS AND SKEWED DISTRIBUTIONS

STANDARD DEVIATION*

CALCULATE PERCENTAGES

*NB You are NOT required to calculate standard deviation; however, you must understand why it is used and what it shows.

INTRODUCTION TO MEASURES OF DISPERSION

Once researchers have identified the central or typical score using measures such as the mean or median, the next important step is to examine how spread out the individual scores are around that centre. This is the role of measures of dispersion. They provide essential information about data consistency. Two sets of scores can have very similar averages, yet one group's results may cluster tightly while the other's vary widely. Without measures of dispersion, important differences in the data can remain hidden.

There are three main measures of dispersion commonly used in psychology. Each offers a different level of detail about the spread of scores.

THE RANGE: The range is the simplest measure of dispersion. It is calculated by subtracting the lowest score in the data set from the highest score. In the jogging experiment, both the jogging and control conditions have a range of 9. This tells us that the total spread from the lowest to the highest rating is identical in both groups. However, the range has a significant limitation: it is based only on the two most extreme scores. A single unusually high or low result can therefore make the range appear much larger than it is for most participants.

CALCULATION OF THE RANGE: Subtract the lowest score in the dataset from the highest score. It is common to then add 1 to the result; this addition of 1 is a mathematical correction to account for any rounding that may have occurred in the dataset's scores.
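Both versions of the calculation can be sketched in a few lines. The function name `value_range`, its `add_one` option, and the ratings are hypothetical and for illustration only.

```python
def value_range(scores, add_one=False):
    """Highest score minus lowest score, optionally adding 1 as a rounding correction."""
    spread = max(scores) - min(scores)
    return spread + 1 if add_one else spread

# HYPOTHETICAL ratings on a 1 to 10 scale, invented for illustration.
ratings = [1, 4, 6, 7, 10]

print(value_range(ratings))                # simple range
print(value_range(ratings, add_one=True))  # range with the +1 correction
```

Whichever version is used, only the two extreme scores enter the calculation, which is the limitation discussed in the evaluation that follows.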

EVALUATION

The range is straightforward to calculate, offering a clear advantage in its simplicity.

It only considers the two extreme scores, the highest and the lowest, which may not accurately represent the dataset as a whole.

It's crucial to acknowledge that the range may be the same in datasets with a strong negative skew (e.g., most students scoring well on a psychology test) and those with a strong positive skew (e.g., most students scoring poorly on a psychology test), highlighting a limitation in its ability to distinguish between differently skewed datasets.

THE INTERQUARTILE RANGE

The interquartile range (IQR) addresses some of the weaknesses of the simple range. It focuses on the middle 50% of the ordered scores and ignores the most extreme values at both ends. In this experiment, the interquartile range is 3.00 for the jogging condition and 3.75 for the control condition. This suggests that the middle 50% of ratings in the jogging condition are slightly more tightly clustered than those in the control condition.
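A minimal sketch of the idea, using HYPOTHETICAL data and the `quantiles` function from Python's standard library. Several methods for locating quartiles exist and they give slightly different answers on small data sets, so this sketch is not guaranteed to reproduce the 3.00 and 3.75 quoted above.

```python
from statistics import quantiles

def iqr(scores):
    """Interquartile range: upper quartile minus lower quartile."""
    # method="inclusive" treats the data as the whole group of interest;
    # this choice of method is an assumption made for the sketch.
    q1, _q2, q3 = quantiles(scores, n=4, method="inclusive")
    return q3 - q1

# HYPOTHETICAL data: the second list swaps the top score for an extreme value.
typical = [1, 2, 3, 4, 5, 6, 7, 8, 9]
with_outlier = [1, 2, 3, 4, 5, 6, 7, 8, 90]

for label, scores in [("typical", typical), ("with outlier", with_outlier)]:
    simple_range = max(scores) - min(scores)
    print(f"{label:13} range = {simple_range:2d}   IQR = {iqr(scores)}")
```

The extreme score changes the range dramatically but leaves the interquartile range untouched, because the IQR only looks at the middle 50% of the ordered scores.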

STANDARD DEVIATION (SEE FURTHER DOWN THE PAGE FOR AN EXPLANATION)

THE SHAPE OF THE DATA: WHY DISTRIBUTION MATTERS

Knowing the average score and how widely the scores are spread is important, but it is still not the full picture. Researchers also need to understand the overall shape of the data — that is, how the scores are distributed across the possible range of values. The shape reveals whether the scores cluster symmetrically around the mean or whether they pile up more towards one end, leaving a long tail on the other side.

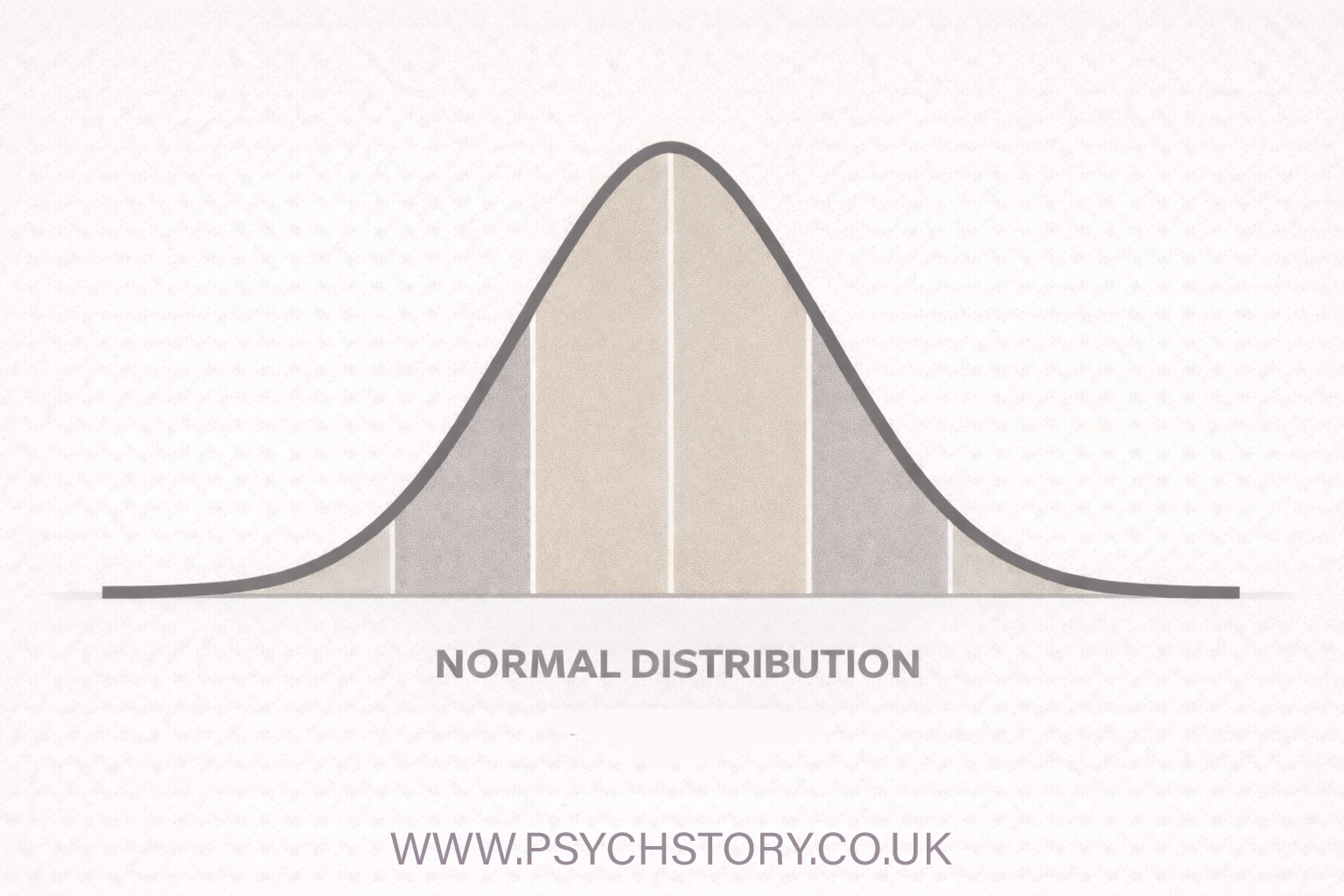

This is where the concept of the normal distribution becomes central to psychological research. When data follow a normal distribution, they form a symmetrical, bell-shaped curve (often simply called a bell curve). In a perfect normal distribution, the mean, median and mode all coincide at the centre, and the scores taper off equally on both sides. Most scores fall close to the mean, with fewer and fewer appearing as we move further away in either direction.

The normal distribution is not just one possible pattern; it is extremely important in psychology because many human characteristics and behaviours (such as height, intelligence scores, and, under certain conditions, psychological test results) tend to follow this bell-shaped distribution when large numbers of people are measured. Understanding whether our data approximate a normal distribution or are skewed (asymmetrical) helps us interpret both the central tendency and dispersion more accurately. It also determines which further statistical techniques are appropriate.

In short, the shape of the distribution ties together everything we have looked at so far. The mean tells us the centre, the standard deviation tells us the average spread, and the shape of the curve shows how that spread is organised. When the data follow a normal distribution, the mean and standard deviation together provide a particularly powerful and predictable description of the entire data set.

PROPERTIES OF A NORMAL DISTRIBUTION

THE NORMAL DISTRIBUTION

So far, we have looked at measures of central tendency, which tell us where the typical score lies, and measures of dispersion, which tell us how spread out the scores are. However, there is still one more important piece of the picture: the overall shape of the data. The shape shows us how the scores are actually arranged across the full range of possible values.

WHAT IS A NORMAL DISTRIBUTION? A normal distribution curve, often called a bell curve due to its bell-like shape, is a graphical representation of a statistical distribution where most observations cluster around a central peak (the mean) and taper off symmetrically towards both tails. Because the curve is perfectly symmetrical with a single peak, the mean, median, and mode are all exactly the same value and are located at the centre of the distribution. This coincidence of the three measures of central tendency is a consequence of the balanced, mirror-image shape on both sides of the mean, and it is a useful way to recognise a normal distribution.

WHY IS IT CALLED “NORMAL”? The term “normal” can sound confusing at first. It does not mean this is the only correct way for data to behave. The name comes from both historical and practical reasons: the normal distribution appears very frequently in real life, and it has properties that make it especially useful in statistics.

IT APPEARS FREQUENTLY IN REAL LIFE. If we measure large numbers of people across many human characteristics, the data often follow a normal distribution. Height, blood pressure, and many psychological test scores are good examples. In each case, most people’s scores cluster around the average value, with fewer and fewer people scoring extremely high or extremely low. A simple everyday example is daily calorie intake. Most people consume a moderate number of calories each day. Very few survive on almost nothing (for example, just one bowl of cornflakes), and very few consume an enormous amount (for example, ten steaks with chips every day). When we plot this kind of data, it forms the familiar symmetrical bell shape. This natural clustering around the mean is why the distribution is described as “normal”.

THE CENTRAL LIMIT THEOREM. There is also a deeper statistical reason why the normal distribution appears so often. The central limit theorem states that when we take a large number of random measurements or influences and add them together, the overall result tends to form a normal distribution, even if the individual pieces did not start out that way. This helps explain why so many variables in psychology appear bell-shaped when studied in large samples.
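The idea can be explored with a small simulation. This is a sketch under stated assumptions: each "influence" is modelled as a random number between 0 and 1 (which is flat, not bell-shaped), twelve of them are added to make one score, and the seed, sample size and function name are arbitrary choices for illustration.

```python
import random
from statistics import mean, pstdev

random.seed(1)  # fixed seed so the simulation is repeatable

def total_of_influences(k=12):
    """Add together k independent random influences, each uniform between 0 and 1."""
    return sum(random.random() for _ in range(k))

# Simulate 10,000 scores, each the sum of 12 small random influences.
totals = [total_of_influences() for _ in range(10_000)]

centre = mean(totals)
spread = pstdev(totals)
# Proportion of simulated scores lying within one standard deviation of the mean.
within_one_sd = sum(abs(t - centre) <= spread for t in totals) / len(totals)

print(f"mean = {centre:.2f}, standard deviation = {spread:.2f}")
print(f"proportion within 1 SD of the mean = {within_one_sd:.2f}")
```

Although each individual influence is equally likely to be anywhere between 0 and 1, roughly two thirds of the summed scores fall within one standard deviation of the mean, which is what a bell-shaped distribution would predict.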

USEFUL MATHEMATICAL PROPERTIES

The normal distribution has one major practical advantage: once we know only the mean and standard deviation, we can describe and predict much about the entire data set. These clear mathematical properties make later stages of analysis, including hypothesis testing and inferential statistics, much more straightforward.
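As an illustration of how much the mean and standard deviation reveal, the sketch below uses Python's `statistics.NormalDist`. It assumes IQ scores with a mean of 100 and a standard deviation of 15, a common scoring convention that is an assumption here and is not stated in the text above.

```python
from statistics import NormalDist

# ASSUMPTION: IQ-style scores with mean 100 and standard deviation 15.
MEAN, SD = 100, 15
iq = NormalDist(mu=MEAN, sigma=SD)

def share_within(k):
    """Proportion of scores within k standard deviations of the mean."""
    return iq.cdf(MEAN + k * SD) - iq.cdf(MEAN - k * SD)

for k in (1, 2, 3):
    low, high = MEAN - k * SD, MEAN + k * SD
    print(f"within {k} SD ({low} to {high}): {share_within(k):.1%}")
```

In a normal distribution about 68% of scores lie within one standard deviation of the mean, about 95% within two, and about 99.7% within three, whatever the particular mean and standard deviation happen to be.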

WHAT DOES THE CURVE ACTUALLY LOOK LIKE? When data follow a normal distribution, plotting them on a graph yields a smooth, symmetrical bell-shaped curve. The highest point of the curve is at the mean. As we move away from the mean in either direction, the number of scores gradually decreases, creating two balanced tapering tails. Most observations lie close to the average, and only a small proportion appear at the extreme ends.

These characteristics make the normal distribution a fundamental statistical concept, underpinning many theoretical and practical applications in various fields.

EXAMPLES OF A NORMAL DISTRIBUTION

Examples of phenomena that typically exhibit a normal distribution include:

Height of the Population: The height distribution in a given population usually follows a normal curve, with most individuals having an average height. The numbers gradually decrease for those who are significantly taller or shorter than average, due to a mix of genetic and environmental factors.

IQ Scores: The distribution of IQ scores across a population also tends to follow a normal distribution. Most individuals' IQs fall within the average range, while fewer people have very high or very low IQs, reflecting various genetic and environmental influences.

Income Distribution (a caution): Income is sometimes offered as an example of a normal curve because there is a large middle group of earners. In practice it is not normally distributed: a small number of very high earners create a long tail to the right, so income is better treated as a positively skewed distribution.

Shoe Size: The distribution of shoe sizes, particularly within specific gender groups, tends to be normally distributed. This is because the physical attributes that determine shoe size are relatively similar across most of the population, with fewer individuals requiring very large or very small sizes.

Birth Weight: The weights of newborns typically follow a normal distribution, with most babies born within a healthy weight range and fewer babies being significantly underweight or overweight at birth.

These examples illustrate how the normal distribution naturally arises in various contexts, providing a useful model for understanding and analysing data in fields ranging from biology and psychology to economics and social sciences.

NOT NORMAL DISTRIBUTIONS

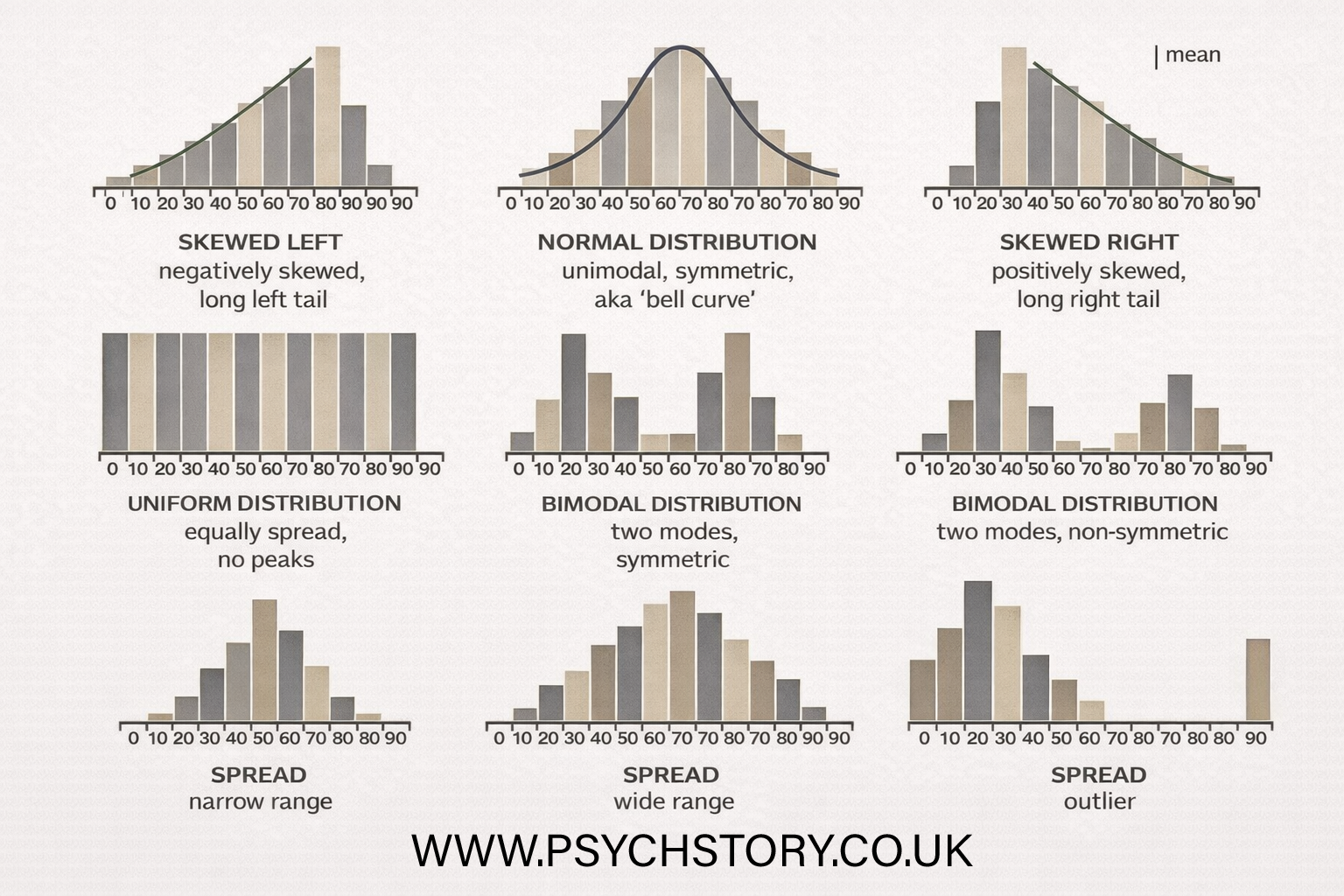

NOT EVERY DISTRIBUTION IS NORMAL

Not all data sets produce a perfect symmetrical bell curve. Some curves are flatter or sharper than others, and sometimes the peak is shifted slightly to the left or right. While many data sets come close to a normal distribution, others are clearly skewed. Skewed distributions are stretched or pulled to one side, creating a long tail on either the left or the right. Understanding these different shapes is important because they affect how we interpret the mean, median, and mode.

WHAT IS SKEWNESS?

Results (data) can be "distributed" (spread out) in different ways. Skewness occurs when the scores are not evenly distributed around the mean. Instead of being symmetrical, most of the data clusters on one side, with a long tail extending to the other. There are two main types of skew: positive (right) skew and negative (left) skew.

RIGHT SKEWED OR POSITIVE DISTRIBUTION.

A positive skew is when the long tail is on the positive side of the peak, and the mean is to the right of the peak value. Some people say it is "skewed to the right". The word "positive" refers to the direction of the tail, not to the sign of the scores: the mean, median, and mode do not have to be positive numbers. The data are concentrated at the lower end of the scale, with a long tail on the right, and the mean is generally to the right of the median. This usually means that a few high values stretch the graph to the right, while most of the data cluster towards the lower end. Real-life examples of positively skewed distributions, with explanations of why they are right-skewed, are given further down the page.

POSITIVE SKEW (RIGHT-SKEWED) The long tail is on the right side of the peak. Most of the scores are bunched up on the lower end, but a small number of unusually high scores stretch the tail out to the right. This pulls the mean to the right of the median and mode.

NEGATIVE SKEW (LEFT-SKEWED) The long tail is on the left side of the peak. Most of the scores are bunched up on the higher end, but a small number of unusually low scores stretch the tail out to the left. This pulls the mean to the left of the median and mode.

WHY DO THE MEAN, MEDIAN AND MODE DIFFER IN SKEWED DISTRIBUTIONS?

In a normal distribution, the mean, median, and mode all sit at exactly the same central point.

In a skewed distribution, however, the extreme scores in the long tail pull the three measures apart.

Here’s why they end up in different positions:

The mode is the most common score. It stays at the highest point on the graph — where most of the scores are clustered.

The median is the middle value when all the scores are put in order from lowest to highest. It is not strongly affected by extreme scores.

The mean (the average) is different. Because it is calculated using every single score, it tends to be pulled towards the extreme values in the tail.

In a positively skewed (right-skewed) distribution, most scores are bunched up on the lower side, with a few very high scores creating a long tail on the right.

Because those few extremely high scores pull the mean upwards, the order is usually: Mode < Median < Mean. The mean is dragged to the right by the outliers, while the mode stays where the majority of the scores are, and the median sits somewhere in between. This difference is one of the most important things to understand about skewed data: the mean can be misleading if there are extreme scores, which is why we often look at the median as well in skewed distributions.
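The ordering can be checked on a small data set. The scores below are HYPOTHETICAL, invented for illustration; the second list is simply a mirror image of the first, to show that the order reverses when the tail is on the left.

```python
from statistics import mean, median, mode

# HYPOTHETICAL scores: most are low, with a few high values forming a right tail.
positive_skew = [1, 2, 2, 2, 3, 3, 4, 5, 9, 15]
# Mirror image of the same scores, so the long tail is now on the left.
negative_skew = [16 - score for score in positive_skew]

for label, scores in [("positive skew", positive_skew),
                      ("negative skew", negative_skew)]:
    print(f"{label}: mode = {mode(scores)}, "
          f"median = {median(scores)}, mean = {mean(scores):.1f}")
```

For the positively skewed list the order is mode, then median, then mean; for the mirrored list the order is reversed, with the mean pulled below the median and mode.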

REAL-LIFE EXAMPLES OF POSITIVE (RIGHT-SKEWED) DISTRIBUTIONS

Here are some clear examples that show why positive skew is so common:

Individual incomes: Most people earn between £20,000 and £50,000, but a small number of very high earners (e.g. millionaires and CEOs) create a long tail stretching to the right. The mean income is pulled upwards by these extremely high values.

Scores on a difficult exam: Most students score low to moderate marks because the test is hard. However, a few very able students achieve very high marks. This creates a long tail on the right and raises the mean score above the median.

Number of pets per household: Most households have 0 or 1 pet. A small number of households have 5, 7 or even more pets. These few large numbers create the long right tail.

House prices: Most houses are moderately priced, but a small number of luxury mansions or properties in prime locations are extremely expensive. These high-value outliers stretch the tail to the right and increase the mean house price.

Reaction times in psychology experiments: Most participants respond fairly quickly, but a few people have much slower reaction times (due to distraction, tiredness, etc.). The slow outliers create a positive skew.

These examples all show the same pattern: the majority of the data clusters on the lower side, while a few extreme high values create the long tail on the right. This is what positive (right) skew looks like in real life.

LEFT SKEWED OR NEGATIVE DISTRIBUTION

SLOPED TO THE LEFT: Why is it called negative skew? Because the long "tail" is on the negative side of the peak, and the mean is to the left of the peak. Some people say it is "skewed to the left" (the long tail is on the left-hand side). The word "negative" refers to the direction of the tail, not to the sign of the scores: the mean, median, and mode do not have to be negative numbers. The data are concentrated at the higher end of the scale, with a long tail on the left. It is also known as a left-skewed distribution, where the mean is generally to the left of the data's median.

EXAMPLES OF NEGATIVE DISTRIBUTIONS AND EXPLANATIONS

In a negatively skewed distribution, most scores or values cluster towards the higher end, while a few unusually low scores create a long tail extending to the left. This pulls the mean to the left of the median and mode.

Here are some clear real-life examples:

Scores on an easy exam. Most students score very highly (e.g., A or A*), but a small number score much lower. The few low scores create the long tail on the left, pulling the mean down.

Age at retirement: Most people retire around 65–68 years old. However, a few people retire very early (e.g. at 50 or 55 due to illness or wealth). These early retirements create the long left tail, making the mean retirement age lower than the most common retirement age.

Olympic long jump distances. Most elite athletes jump between 7.5 and 8.5 metres. However, a few athletes have much shorter jumps (e.g. 5–6 metres due to injury or poor performance). These low outliers create a left-tail.

Accuracy on a simple task. Most people get almost everything right, but a small number score much lower (due to mistakes or difficulty). These low outliers pull the tail to the left. (Note that time taken on a task usually skews the other way: a few very slow people create a tail on the right.)

Lifespan of consumer products: Most products last as long as expected or longer, but a few fail very early. These early failures create the long left tail.

Age of death in a population. Most people live into their 70s, 80s or 90s. However, a small number die very young (due to illness or accidents). These early deaths create a tail on the left, pulling the mean age of death downwards.

In all these examples, most of the data is bunched on the higher side, while a few unusually low values extend the tail to the left. This is what gives a negative (left-skewed) distribution its characteristic shape.
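A rough way to check the direction of skew is to compare the mean with the median. The sketch below applies that rule of thumb to a made-up set of easy-exam marks. The function name `skew_direction` and the marks are assumptions for illustration, and the check is only a quick guide, not a formal test of skewness.

```python
from statistics import mean, median

def skew_direction(scores):
    """Rule-of-thumb check: compare the mean with the median."""
    m, med = mean(scores), median(scores)
    if m < med:
        return "negative (left) skew"
    if m > med:
        return "positive (right) skew"
    return "no skew detected by this check"

# Hypothetical marks (out of 100) on an easy exam: mostly high, two very low.
easy_exam = [95, 92, 90, 94, 96, 93, 91, 97, 60, 45]

print("Mean:  ", mean(easy_exam))
print("Median:", median(easy_exam))
print(skew_direction(easy_exam))
```

The two low marks drag the mean below the median, which matches the pattern described above for negatively skewed data.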

NO DISTRIBUTION: Sometimes the data do not follow any clear, known pattern, such as a normal or skewed distribution. In this case we say there is no identifiable distribution or the distribution is unknown. The scores are scattered in a way that does not form a recognisable shape on a graph, making it difficult to predict how the data are organised or to apply standard statistical rules confidently.

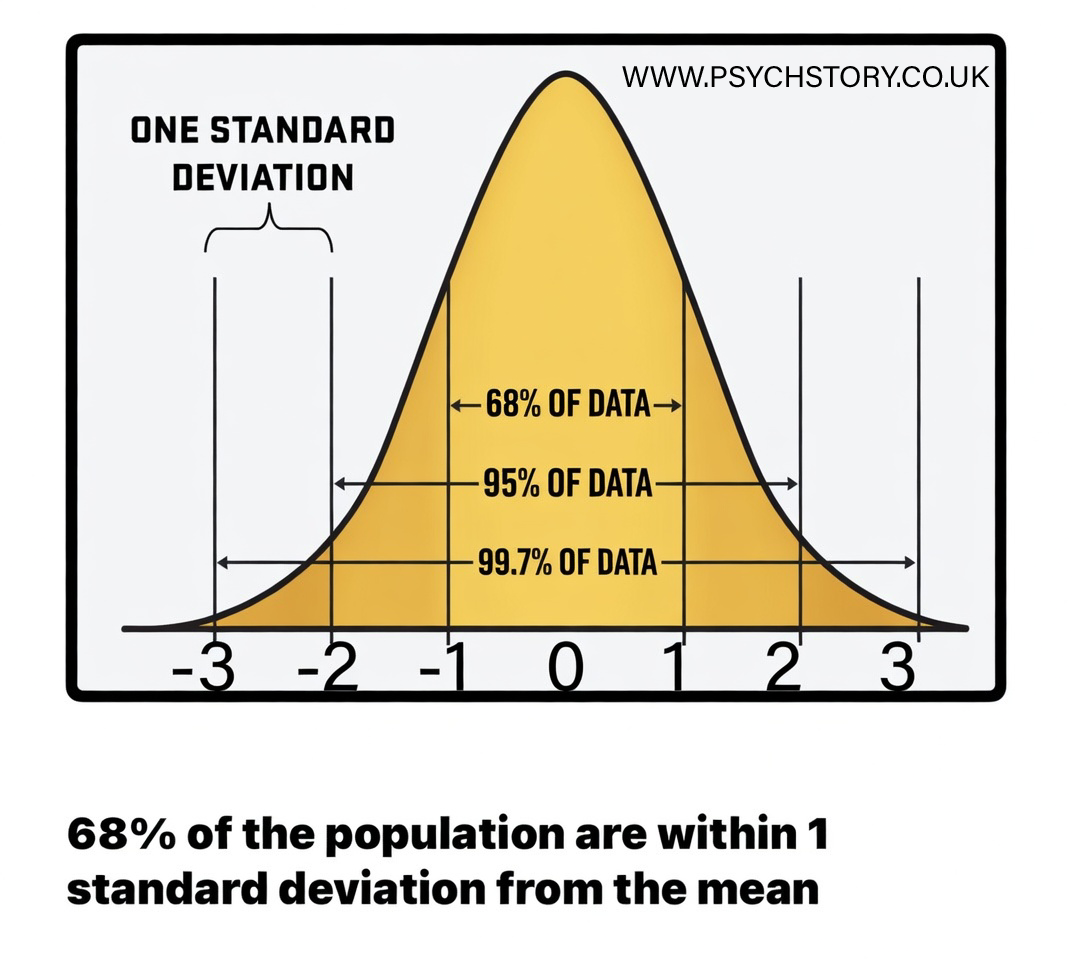

THE STANDARD DEVIATION IN DETAIL

I'll be honest. Standard deviation is a more complex concept than the other measures of central tendency and dispersion we've covered so far. It is more intricate than the range, but it is also far more insightful for truly understanding data. Many regard standard deviation as the pinnacle of descriptive statistics. It is sometimes called the “mean of the deviations” because it tells us the average distance of each score from the mean. Without it, the full story told by the data often remains incomplete. Standard deviation is a crucial measure in statistics and probability. It captures the extent of variability — how much the scores differ from the average (the mean). In simple terms, it quantifies the spread of the results.
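To make the idea concrete, here is a minimal sketch of the calculation on a small made-up set of ratings. Strictly, the standard deviation is the square root of the average squared distance from the mean, which is why "average distance from the mean" is best read as an approximation. The sketch divides by the number of scores (the population formula); many textbooks and calculators divide by one less than the number of scores when working with a sample, so check which version your specification expects.

```python
from math import sqrt
from statistics import pstdev

# Hypothetical attractiveness ratings (1-10) from eight participants.
ratings = [4, 6, 5, 7, 5, 6, 3, 4]

mean_rating = sum(ratings) / len(ratings)        # 1. find the mean
deviations = [x - mean_rating for x in ratings]  # 2. distance of each score from the mean
squared = [d ** 2 for d in deviations]           # 3. square each distance (removes minus signs)
variance = sum(squared) / len(squared)           # 4. average the squared distances
sd = sqrt(variance)                              # 5. square-root to return to the original units

print(f"Mean = {mean_rating}, variance = {variance}, SD = {sd:.2f}")
# Cross-check against the standard library's population standard deviation.
print(f"pstdev check = {pstdev(ratings):.2f}")
```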

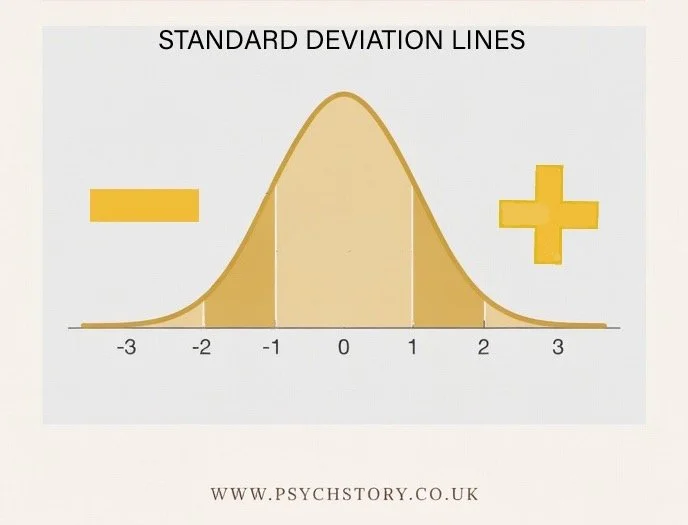

STANDARD DEVIATION LINES

On a normal distribution (bell curve), we usually mark three standard deviation lines on each side of the mean.

These lines serve two important purposes:

HOW FAR FROM THE NORM? They act as clear markers for how unusual or extreme a score is. For example, eating 12 meals per day would be far outside the normal range for most people, so you would not expect to find that result clustered around the mean. It would sit way out in the tail of the distribution.

WHAT PERCENTAGE OF PEOPLE FALL AT EACH LEVEL? Unlike the range, which only tells us the difference between the highest and lowest score, standard deviation lines tell us the percentage of scores that fall within certain distances from the mean.

For example, if the results in a class range from 0 to 100, the range alone cannot tell us whether one person scored 100 and everyone else scored low, or whether nearly all students scored highly. With standard deviation, we can see how the scores are spread out around the mean.

THE THREE STANDARD DEVIATION LINES

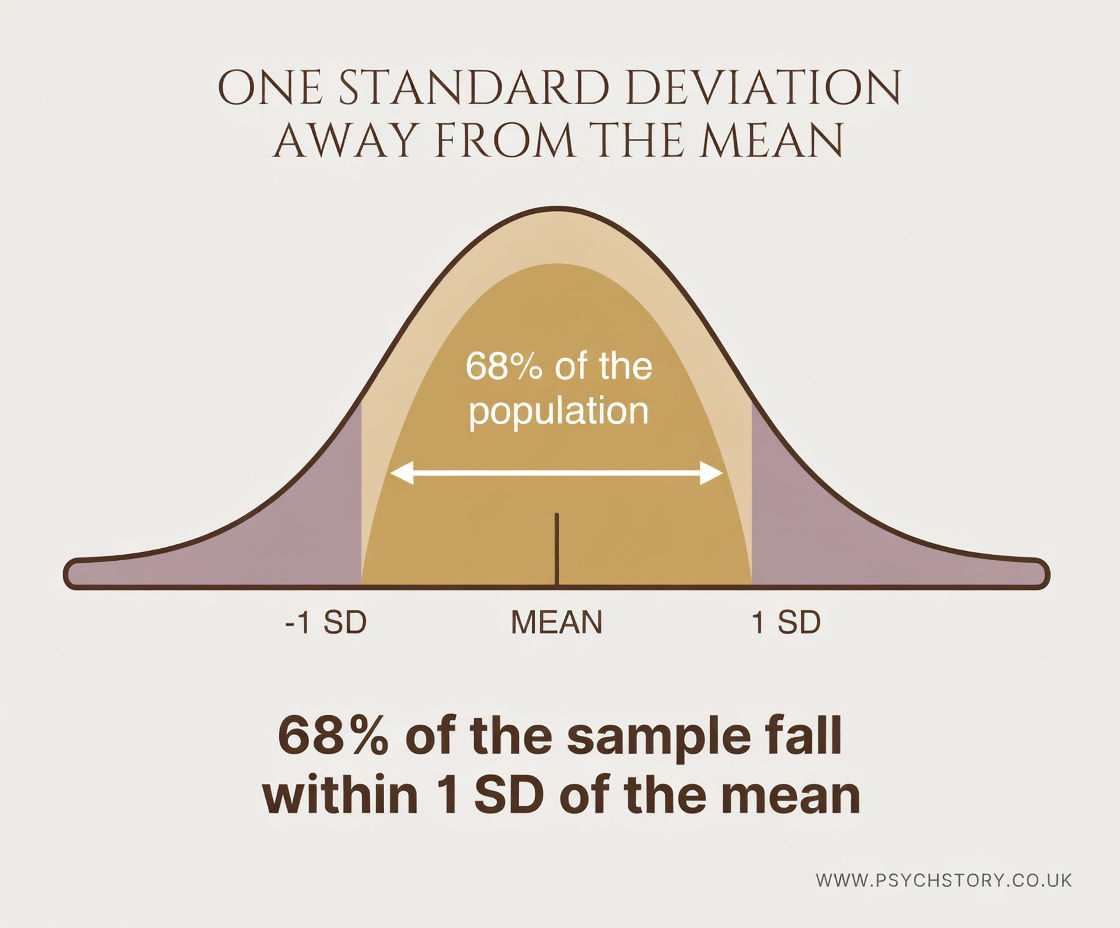

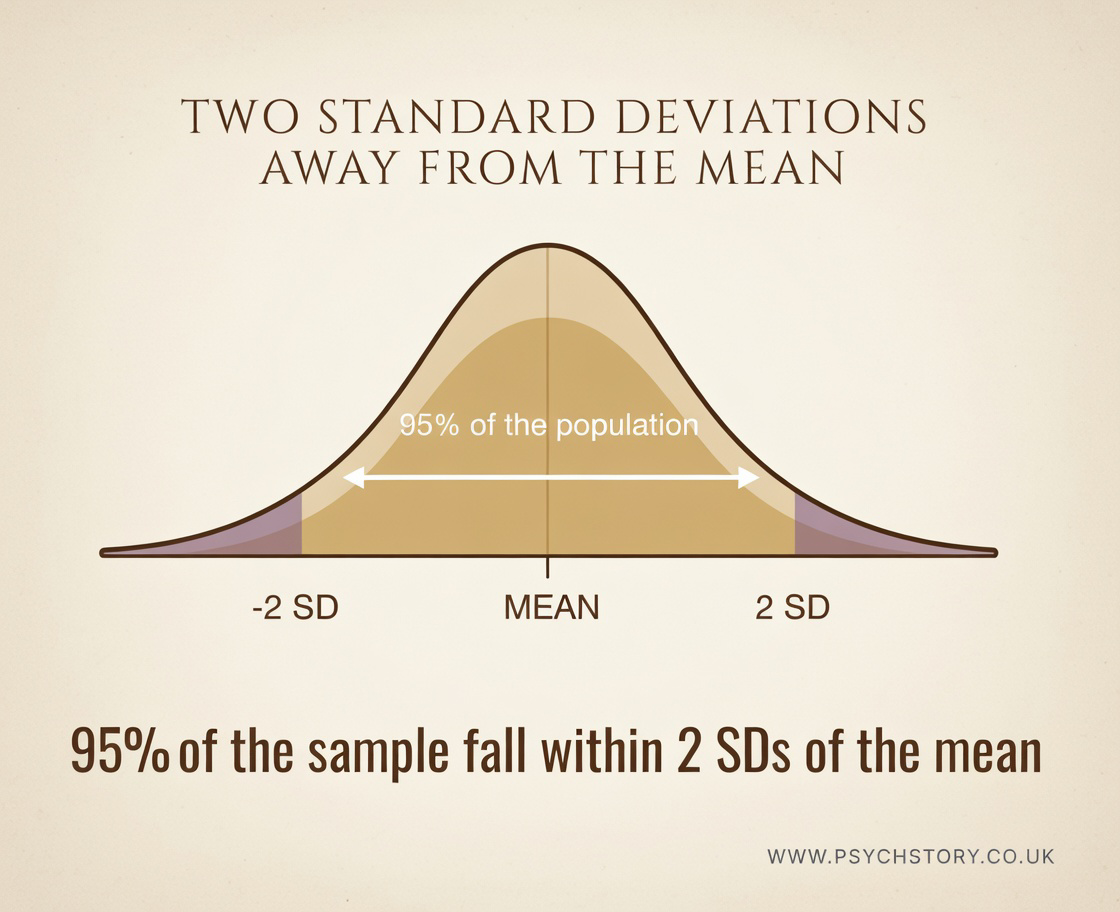

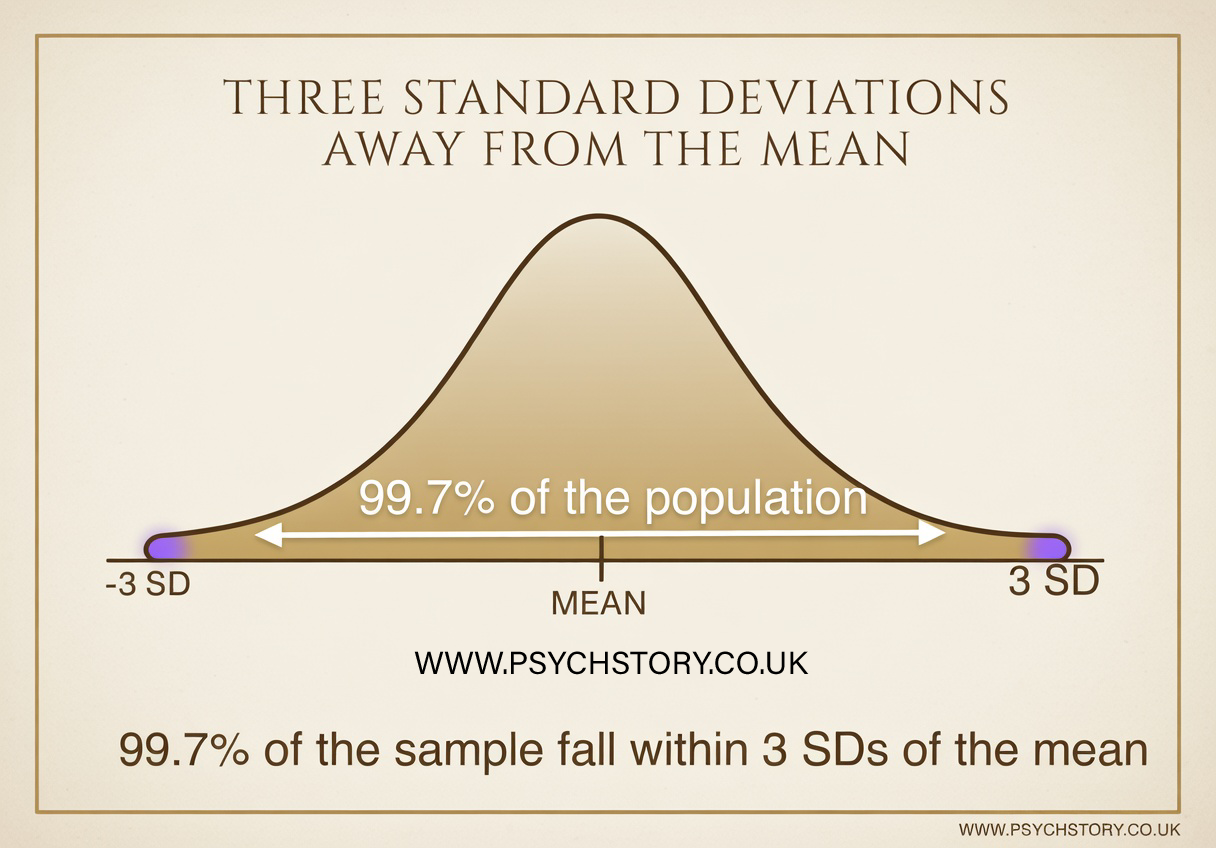

When data follows a normal distribution, the standard deviation lines on the bell curve tell us exactly what percentage of the scores fall within certain distances from the mean. These percentages are very consistent:

±1 standard deviation from the mean contains approximately 68.2% of the population/scores

±2 standard deviations from the mean contains approximately 95.4% of the population/scores

±3 standard deviations from the mean contains approximately 99.7% of the population/scores

Footnote: These figures are often remembered in a simplified, rounded form as 68%, 95%, and roughly 99% for the third line when working quickly in exams
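These percentages come from the mathematics of the normal curve and can be reproduced directly. The sketch below uses `NormalDist` from Python's standard `statistics` module (available from Python 3.8). The helper name `share_within` is an assumption for illustration.

```python
from statistics import NormalDist

# A standard normal curve: mean 0, standard deviation 1.
standard_normal = NormalDist(mu=0, sigma=1)

def share_within(k):
    """Proportion of a normal distribution lying within k SDs of the mean."""
    return standard_normal.cdf(k) - standard_normal.cdf(-k)

for k in (1, 2, 3):
    print(f"±{k} SD: {share_within(k) * 100:.1f}%")
```

The results agree with the figures above to within rounding (the first band works out at roughly 68.3% when calculated to more decimal places).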

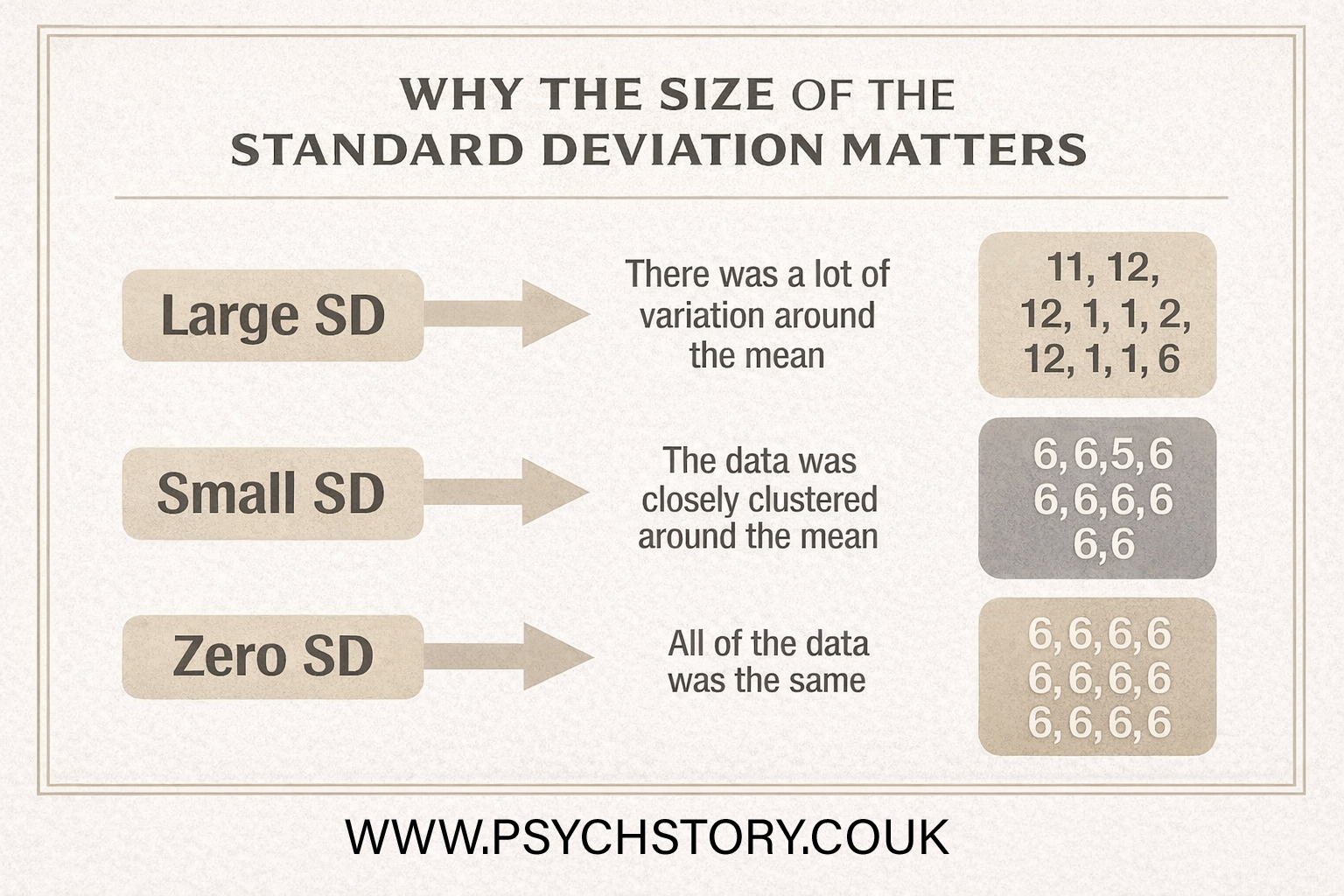

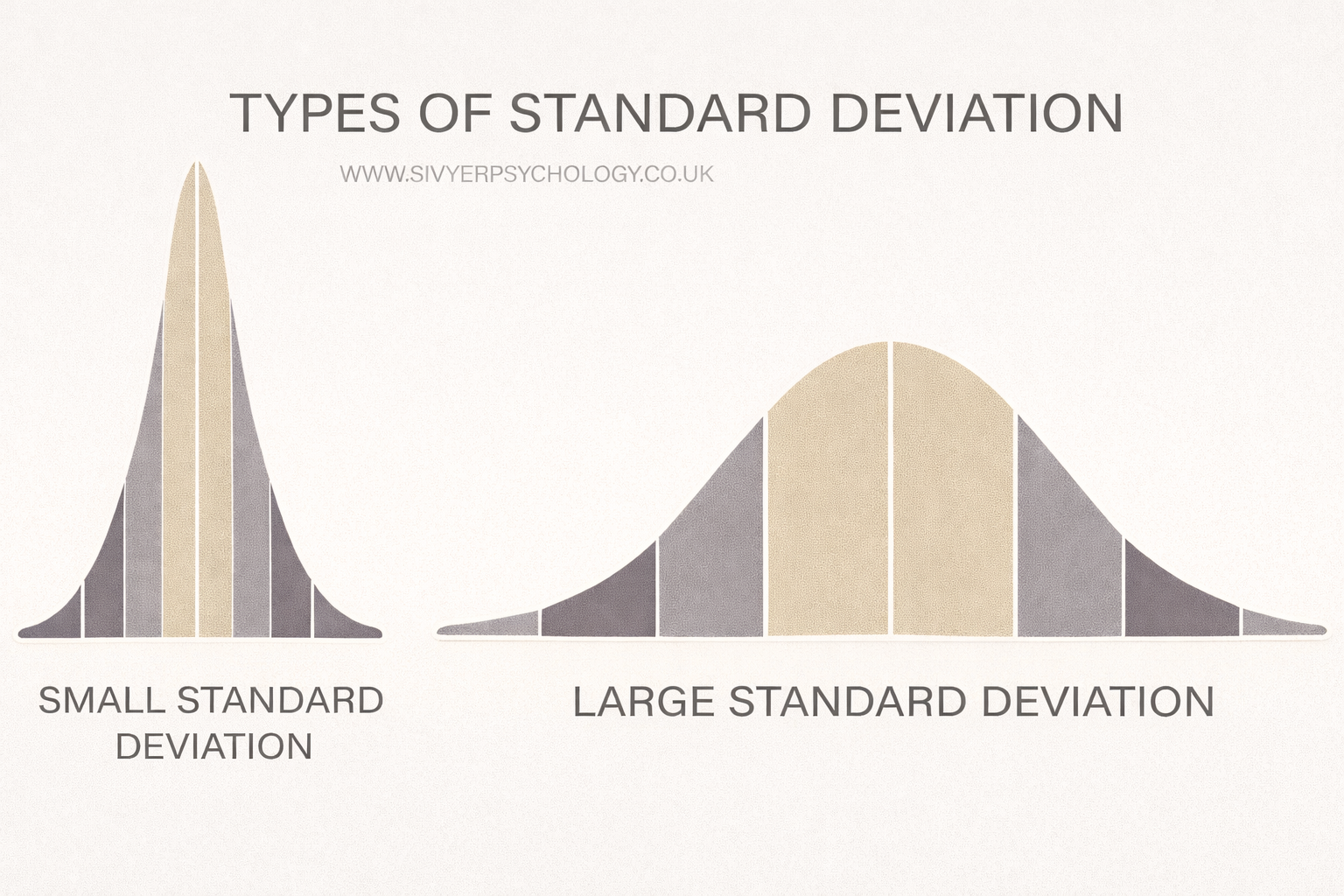

THE SIZE OF THE STANDARD DEVIATION MATTERS

Standard deviation is like a measuring tape for how spread out the numbers are in a data set. Imagine you have many points on a line. If they’re all huddled close together, there’s not much difference between them, so the standard deviation is small. But if they’re scattered all over the place, far from each other, then the standard deviation is large because there’s a lot of variety in the numbers.

It’s a way to see how much the scores differ from the average or the “normal” amount. So if everyone in your class scores similarly on a test, the standard deviation will be low. But if the scores are all over the map, the standard deviation will be high, showing a big range in how everyone did.

The size of the standard deviation tells us how consistent or variable the scores are.

A small standard deviation means the scores are tightly clustered around the mean. Most participants gave very similar ratings, so the data is highly consistent. For example, if all 20 students in a class scored between A and A* on a test, the standard deviation would be small.

A large standard deviation means the scores are widely spread out. Some participants gave very high ratings while others gave very low ratings, showing low consistency and high variability. For example, if the same 20 students scored anywhere from A* down to a U, the standard deviation would be large.

When the results are tightly grouped around the mean, the bell-shaped curve is steep and narrow, and the standard deviation is small. When the results are widely spread, the bell curve is relatively flat and wide, and the standard deviation is large.

In short, the standard deviation does not just tell us the average spread — it tells us how reliable or predictable the results are. A small SD suggests the findings are fairly stable across participants, whereas a large SD indicates the results are more variable and less consistent.
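The contrast is easiest to see when two groups share the same mean. The sketch below uses two made-up classes (the scores are assumptions for illustration) that both average 70% but differ greatly in spread.

```python
from statistics import mean, pstdev

# Hypothetical test scores (percentages) for two classes with the same mean.
class_a = [68, 70, 71, 69, 72, 70]   # tightly clustered
class_b = [40, 95, 55, 85, 100, 45]  # widely spread

for name, scores in (("Class A", class_a), ("Class B", class_b)):
    print(f"{name}: mean = {mean(scores)}, SD = {pstdev(scores):.1f}")
```

Looking at the means alone, the two classes appear identical. Only the standard deviation reveals that Class A performed consistently while Class B did not.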

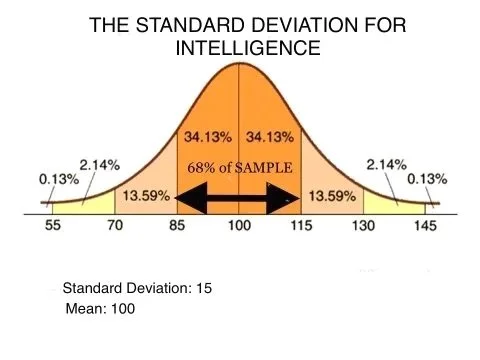

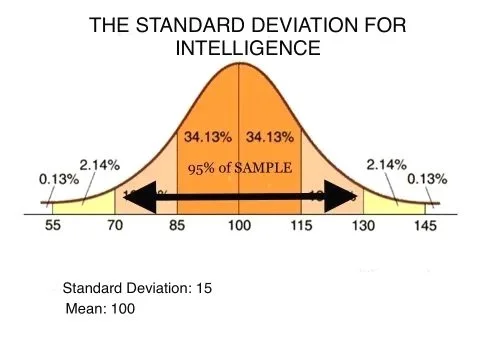

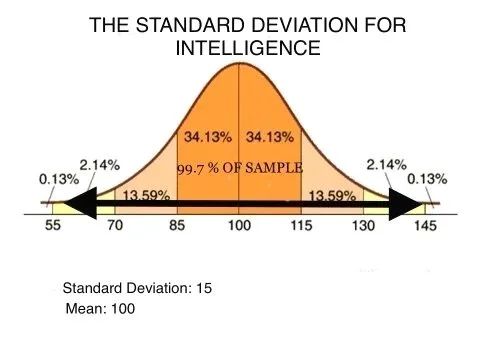

STANDARD DEVIATION EXAMPLE WITH IQ

WHAT IQ TEST SCORES MEAN

IQ tests are usually standardised with a mean of 100 and a standard deviation of 15. The scores below are based on the most commonly used classifications (e.g., Wechsler scales). Percentages show roughly what proportion of the population falls into each range.

145 and above – Very Gifted / Highly Advanced (roughly 0.1% of the population). This is the range often associated with exceptional intellectual ability. Historical estimates for figures like Albert Einstein are around 160. Claims of extremely high scores (such as Marilyn vos Savant at 228 or Ainan Cawley at 263) are controversial, often based on non-standard or unverified testing methods, and are not widely accepted by psychologists.

130–144 – Very Superior (approximately 2.1% of the population). This level is typical of many scientists, inventors, and highly accomplished professionals.

120–129 – Superior (approximately 6.4% of the population). Many people with PhDs or professional doctorates (e.g., MDs) have IQs in or near this range. 125 is sometimes cited as an approximate average for those holding advanced doctoral degrees.

111–119 – High Average (approximately 15.7% of the population). 111–115 is often cited as roughly the average IQ for students successfully completing A-levels.

90–110 – Average (approximately 51.6% of the population). This is the middle range for the general population, with 100 being the exact mean.

80–89 – Low Average (approximately 13.7% of the population)

70–79 – Borderline / Educational Needs (approximately 6.4% of the population). At an IQ of around 75, there is roughly a 50% chance of successfully reaching Year 9 (KS3) without significant additional support.

Below 70 – Extremely Low / Intellectual Disability (approximately 2.3% of the population). This range indicates a significant intellectual disability. For example, the average IQ for individuals with Down syndrome is typically between 30 and 70.

Below 20–25 – Profound Intellectual Disability. People in this range often have profound and multiple learning difficulties, frequently combined with physical disabilities, sensory impairments, and complex medical needs. They require substantial lifelong support.

IQ SDS

68% of the population has an IQ between 85 and 115

95% of the population has an IQ between 70 and 130

99.7% of the population has an IQ between 55 and 145

FOOTNOTES:

Percentiles are approximate and based on the normal distribution.

"Genius" is not an official clinical category on modern IQ tests (Wechsler, Stanford-Binet, etc.). Terms like "Very Superior" or "Highly Gifted" are preferred.

Extremely high IQ claims (228, 263) are heavily disputed and not accepted as reliable by most psychologists.

Average IQ for PhDs/MDs is typically reported in the 115–125 range.

PLOTTING STANDARD DEVIATION ON A BELL CURVE

Once you have calculated the standard deviation, you only need two pieces of information to plot it on a normal distribution curve: the mean and the standard deviation.

For example, with IQ scores, the mean is 100 and the standard deviation is 15. You place the mean at the centre peak of the bell curve. Then you subtract the standard deviation from the mean to mark each line on the left, and add it to the mean to mark each line on the right:

Mean – 1 SD = 100 – 15 = 85

Mean + 1 SD = 100 + 15 = 115

This shows that 68% of scores fall between 85 and 115, 95% between 70 and 130, and 99.7% between 55 and 145.

With just the mean and the standard deviation, you can mark the intervals clearly on the bell curve by subtracting the SD to the left and adding it to the right.
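The same stepping-out procedure can be written as a short function. This is a minimal sketch; the name `sd_lines` is an assumption for illustration.

```python
def sd_lines(mean, sd, max_k=3):
    """Return {k: (lower, upper)} for k = 1..max_k standard deviations."""
    return {k: (mean - k * sd, mean + k * sd) for k in range(1, max_k + 1)}

# IQ scores: mean 100, standard deviation 15.
iq_lines = sd_lines(mean=100, sd=15)
for k, (low, high) in iq_lines.items():
    print(f"±{k} SD: {low} to {high}")
```

Swapping in a different mean and standard deviation gives the lines for any other normally distributed measure.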

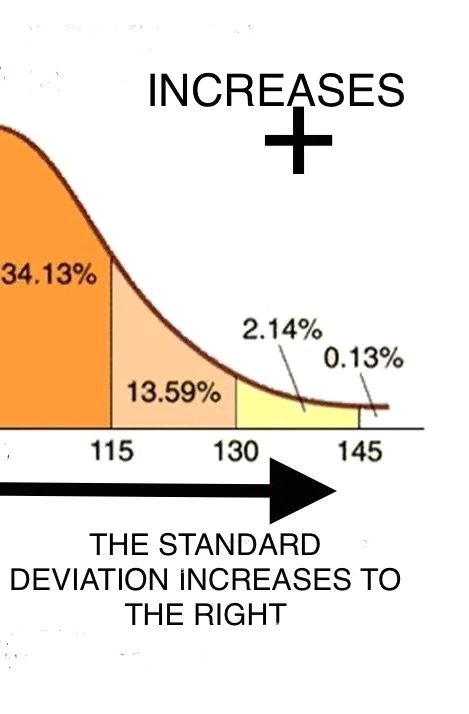

THE STANDARD DEVIATION TO THE RIGHT (ABOVE THE MEAN)

Because the normal distribution is symmetrical, we can break down the percentages on the right side of the mean (above average IQ). Here’s what the standard deviation lines tell us:

1 STANDARD DEVIATION ABOVE THE MEAN

68% of people have an IQ between 85 and 115. Half of that 68% lies on the right side of the mean. → 34.1% of the population has an IQ between 100 and 115.

2 STANDARD DEVIATIONS ABOVE THE MEAN

95% of people have an IQ between 70 and 130. Half of that 95% lies on the right side. → 47.5% of the population has an IQ between 100 and 130.

Subtracting the previous section gives us: 47.5% – 34.1% = 13.4% of people have an IQ between 115 and 130.

3 STANDARD DEVIATIONS ABOVE THE MEAN

99.7% of people have an IQ between 55 and 145. Half of that 99.7% lies on the right side. → 49.85% of the population has an IQ between 100 and 145.

Breaking it down further:

Between 115 and 130 = 13.4%

Between 130 and 145 = 49.85% – 34.1% – 13.4% = 2.35%

Finally, the extreme upper tail: People with an IQ above 145 = 0.15% of the population.

SUMMARY (EASY TO REMEMBER)

100 – 115 → 34.1%

115 – 130 → 13.4%

130 – 145 → 2.35%

Above 145 → 0.15%
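The band percentages can also be calculated directly from the normal curve rather than by halving and subtracting. The sketch below assumes IQ is exactly normally distributed with mean 100 and SD 15. Because the working above starts from the rounded figures of 68%, 95% and 99.7%, the exact values differ slightly: roughly 34.1%, 13.6%, 2.1% and 0.1%.

```python
from statistics import NormalDist

# IQ modelled as a normal distribution: mean 100, SD 15.
iq = NormalDist(mu=100, sigma=15)

# Proportion of the population in each band above the mean.
bands = {
    "100-115": iq.cdf(115) - iq.cdf(100),
    "115-130": iq.cdf(130) - iq.cdf(115),
    "130-145": iq.cdf(145) - iq.cdf(130),
    "above 145": 1 - iq.cdf(145),
}

for label, share in bands.items():
    print(f"{label}: {share * 100:.2f}%")
```

The four bands add up to 50%, as they must for one half of a symmetrical curve. For exam purposes the rounded figures in the summary are the ones normally expected.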

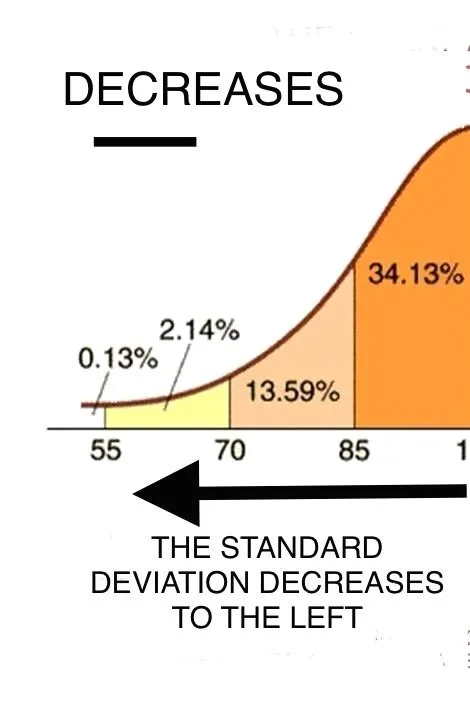

THE STANDARD DEVIATION TO THE LEFT (BELOW THE MEAN)

Because the normal distribution is symmetrical, we can break down the percentages on the left side of the mean (below average IQ). Here’s what the standard deviation lines tell us:

1 STANDARD DEVIATION BELOW THE MEAN

68% of people have an IQ between 85 and 115. Half of that 68% lies on the left side of the mean. → 34.1% of the population has an IQ between 85 and 100.

2 STANDARD DEVIATIONS BELOW THE MEAN

95% of people have an IQ between 70 and 130. Half of that 95% lies on the left side. → 47.5% of the population has an IQ between 70 and 100.

Subtracting the previous section gives us: 47.5% – 34.1% = 13.4% of people have an IQ between 70 and 85.

3 STANDARD DEVIATIONS BELOW THE MEAN

99.7% of people have an IQ between 55 and 145. Half of that 99.7% lies on the left side. → 49.85% of the population has an IQ between 55 and 100.

Breaking it down further:

Between 70 and 85 = 13.4%

Between 55 and 70 = 49.85% – 34.1% – 13.4% = 2.35%

Finally, the extreme lower tail: People with an IQ below 55 = 0.15% of the population.

SUMMARY (EASY TO REMEMBER)

85 – 100 → 34.1%

70 – 85 → 13.4%

55 – 70 → 2.35%

Below 55 → 0.15%

CALCULATION OF SD IN IQ

To clarify: It's stated that 99.7% of people have an IQ between 55 and 145.

Given the assumption of a normal distribution, we aim to find the mean and standard deviation.

The mean is the midpoint of 55 and 145, calculated as:

Mean = (55 + 145) / 2 = 100

Considering 99.7% of the data falls within three standard deviations of the mean (spanning six standard deviations in total):

One standard deviation is calculated by dividing the range (145 - 55) by 6

Standard Deviation = (145 - 55) / 6 = 90 / 6 = 15
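The working above can be wrapped in a small function. This is a sketch under the same assumption as the text: the scores are normally distributed and the two numbers given are the points three standard deviations either side of the mean. The function name is hypothetical.

```python
def mean_and_sd_from_three_sd_range(lower, upper):
    """Recover the mean and SD from the interval covering mean ± 3 SD."""
    mean = (lower + upper) / 2   # the mean is the midpoint of the interval
    sd = (upper - lower) / 6     # the interval spans six standard deviations
    return mean, sd

iq_mean, iq_sd = mean_and_sd_from_three_sd_range(55, 145)
print(f"Mean = {iq_mean}, SD = {iq_sd}")
```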

APPLIED EXAMPLE OF ANALYSING A STANDARD DEVIATION

COMPARING SLIMMING WORLD AND WEIGHT WATCHERS DIETS

THE UTILITY OF STANDARD DEVIATIONS: Standard deviations can be crucial in understanding the variability between groups

Imagine the following scenario:

“You're looking to shed some pounds and have learned that being part of a support group, like Weight Watchers or Slimming World, boosts your odds of success. But when you are faced with the choice, which one do you pick? Naturally, you aim to join the group with the most impressive outcomes. Embracing your inner nerd, you request descriptive statistics to verify their success stories and receive weight loss data for 5,000 dieters.”

OPTION 1: *SLIMMING WORLD: Pounds in weight lost during 06/26 - 08/26: MEAN = 20, SD = 8

OPTION 2: *WEIGHT WATCHERS: Pounds in weight lost during 06/26 - 08/26: MEAN = 10, SD = 2

*Disclaimer: Please be aware that the data provided above is entirely fictional, and I possess no knowledge of the actual performance, success, or failure rates of Weight Watchers or Slimming World. Nonetheless, it's worth noting that participating in a support group is generally advantageous.

APPLIED EXAMPLE: ANALYSING STANDARD DEVIATION

COMPARING SLIMMING WORLD AND WEIGHT WATCHERS

Standard deviation is extremely useful when comparing variability between different groups or conditions. Imagine you want to lose weight and are deciding between Slimming World and Weight Watchers. You request descriptive statistics from 5,000 dieters over a three-month period.

SLIMMING WORLD: Mean weight loss = 20 pounds, Standard Deviation = 8

WEIGHT WATCHERS: Mean weight loss = 10 pounds, Standard Deviation = 2

QUESTIONS

Which diet would you choose and why?

Why do you think Slimming World had such a large standard deviation while Weight Watchers had a very small one?

WHY A SMALL STANDARD DEVIATION MATTERS

A small standard deviation is a strong positive sign in real-world interventions such as diets.

It tells us that the results are highly consistent and predictable. Most people achieve a very similar outcome. This suggests the programme is clear, easy to follow correctly, and works reliably for the majority of participants. In this example, Weight Watchers has a very small standard deviation (SD = 2). This means almost all participants lost roughly the same amount of weight. The programme produced stable, dependable results for thousands of people. In contrast, Slimming World has a large standard deviation (SD = 8). This shows high variability. Some people lost a great deal of weight, but many others lost very little or even gained weight.

INTERNAL VALIDITY ISSUE

The large standard deviation observed in Slimming World raises serious concerns about the diet's internal validity. It suggests that Slimming World may be less valid as a weight-loss programme because it is harder for most people to follow consistently. Possible reasons include:

More complicated or confusing rules

Foods that are harder to find in supermarkets

A programme that requires more planning and effort

Because of this, some people follow it very strictly and lose a lot of weight, while many others struggle to stick to it properly and see poor results. This creates the spread of outcomes. Weight Watchers, with its much smaller standard deviation, appears to be a more valid and easier-to-follow diet for most people. The low variability suggests clearer guidelines and better consistency across participants.

KEY EXAM POINT

When comparing two conditions, never judge by the mean alone. A small standard deviation usually indicates a more reliable, consistent, and internally valid intervention. A large standard deviation often signals that one condition is harder to implement properly, which reduces its internal validity.
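One way to weigh the mean and the standard deviation together is to model each diet as a normal distribution and ask specific questions of it. The sketch below does this with the fictional figures from the scenario. The normal-distribution assumption is ours and is not stated in the scenario, so the outputs are illustrative only.

```python
from statistics import NormalDist

# Fictional figures from the scenario (pounds lost), modelled as normal curves.
slimming_world = NormalDist(mu=20, sigma=8)
weight_watchers = NormalDist(mu=10, sigma=2)

for name, diet in (("Slimming World", slimming_world),
                   ("Weight Watchers", weight_watchers)):
    low, high = diet.mean - diet.stdev, diet.mean + diet.stdev
    print(f"{name}: about 68% lose between {low:g} and {high:g} pounds")

# Share of Slimming World dieters expected to beat the Weight Watchers mean (10 pounds).
sw_beats_ww_mean = 1 - slimming_world.cdf(weight_watchers.mean)
# Share of Slimming World dieters expected to lose nothing or gain weight.
sw_no_loss = slimming_world.cdf(0)

print(f"Slimming World dieters losing more than 10 pounds: {sw_beats_ww_mean:.1%}")
print(f"Slimming World dieters losing nothing or gaining: {sw_no_loss:.1%}")
```

Under these assumptions the two statistics answer different questions: the standard deviation shows how predictable each outcome is, while the mean shows how large the typical loss is. A full answer to the questions above should discuss both.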

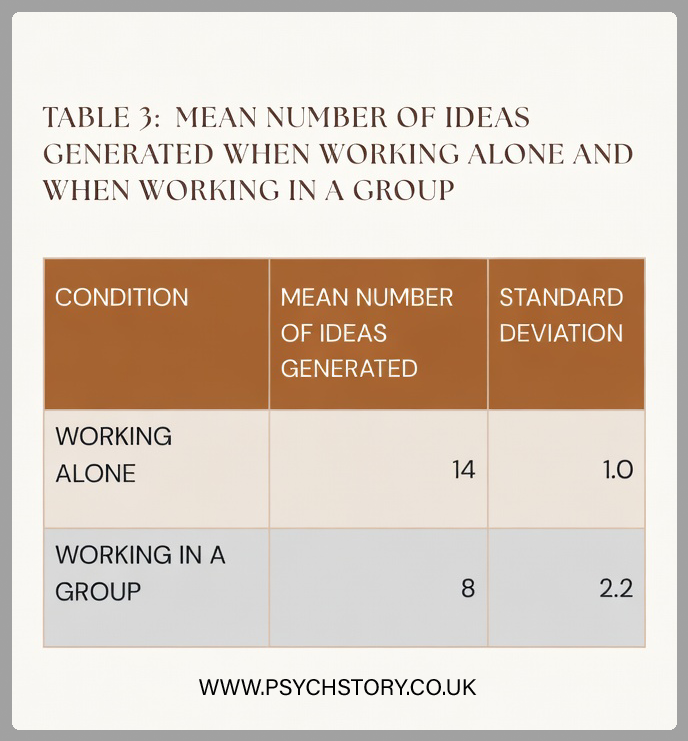

AQA EXAM QUESTION: STANDARD DEVIATIONS

A psychologist believed that people generate more new ideas on their own than in groups, and that the belief that people are more creative in groups is false. To test this idea, he arranged for 30 people to participate in a study to generate ideas for boosting tourism. Participants were randomly allocated to one of two groups. Fifteen of them were asked to work individually and generate as many ideas as possible to boost tourism in their town. The other fifteen participants were divided into three groups, and each group was asked to "brainstorm" to generate as many ideas as possible to boost tourism in their town. The group "brainstorming" sessions were recorded, and the number of ideas generated by each participant was noted. The psychologist used a statistical test to determine whether there was a significant difference in the number of ideas generated by participants working alone versus in groups. A significant difference was found at the 5% level for a two-tailed test (p ≤ 0.05).

TABLE 3: MEAN NUMBER OF IDEAS GENERATED WHEN WORKING ALONE AND WHEN WORKING IN A GROUP

QUESTION: Concerning the data in Table 3, outline and discuss the findings of this investigation. (10 marks) AO1 = 3-4 marks and AO2/3 = 6 marks.

Exam hint: Questions regarding the interpretation of standard deviation values are often worth several marks, so linking your answer to the question rather than just pointing out how they differ is essential. Tell the examiner what these scores tell you about the data!

ESSAY ADVICE

AO1 = 3-4 marks: Outline the findings of the investigation

STEP 1: Look at the means

Participants working alone produced a mean of 14 ideas.

Participants working in groups produced a mean of 8 ideas.

This shows that, on average, individuals working alone generated almost twice as many ideas as those working in groups.

STEP 2: Work out and interpret the standard deviations

You only need two numbers: the mean and the standard deviation.

You then move outwards from the mean in both directions.

GRAPH A: MEAN NUMBER OF IDEAS GENERATED WHEN WORKING ALONE

GRAPH A: WORKING ALONE

STANDARD DEVIATION = 1

MEAN = 14

RIGHT SIDE

14 + 1 = 15 (1 SD)

15 + 1 = 16 (2 SD)

16 + 1 = 17 (3 SD)

LEFT SIDE

14 − 1 = 13 (1 SD)

13 − 1 = 12 (2 SD)

12 − 1 = 11 (3 SD)

THIS MEANS

68% of participants scored between 13 and 15 ideas

95% of participants scored between 12 and 16 ideas

99.7% of participants scored between 11 and 17 ideas

This is a very tight spread. Most participants produced a very similar number of ideas.

GRAPH B: MEAN NUMBER OF IDEAS GENERATED WHEN WORKING IN A GROUP

GRAPH B: WORKING IN A GROUP

STANDARD DEVIATION = 2.2

MEAN = 8

RIGHT SIDE

8 + 2.2 = 10.2 (1 SD)

10.2 + 2.2 = 12.4 (2 SD)

12.4 + 2.2 = 14.6 (3 SD)

LEFT SIDE

8 − 2.2 = 5.8 (1 SD)

5.8 − 2.2 = 3.6 (2 SD)

3.6 − 2.2 = 1.4 (3 SD)

THIS MEANS

68% of participants scored between 5.8 and 10.2 ideas

95% of participants scored between 3.6 and 12.4 ideas

99.7% of participants scored between 1.4 and 14.6 ideas

This is a much wider spread. Some participants produced very few ideas, while others produced many more.
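The two sets of standard deviation lines can be generated and compared side by side. This is a sketch using the means and standard deviations quoted above. It assumes the scores in each condition are roughly normally distributed, which is a strong assumption with only 15 participants per condition, so the percentages attached to each band should be treated as approximate. The helper name `sd_range` is hypothetical.

```python
def sd_range(mean, sd, k=1):
    """Interval from k SDs below the mean to k SDs above it."""
    return (mean - k * sd, mean + k * sd)

alone = {"mean": 14, "sd": 1}
group = {"mean": 8, "sd": 2.2}

for label, stats in (("Alone", alone), ("Group", group)):
    for k in (1, 2, 3):
        low, high = sd_range(stats["mean"], stats["sd"], k)
        print(f"{label} ±{k} SD: {low:.1f} to {high:.1f}")

# Do the ±1 SD bands of the two conditions overlap at all?
alone_1sd = sd_range(alone["mean"], alone["sd"])
group_1sd = sd_range(group["mean"], group["sd"])
ranges_overlap = alone_1sd[0] <= group_1sd[1] and group_1sd[0] <= alone_1sd[1]
print("±1 SD bands overlap:", ranges_overlap)
```

The ±1 SD bands do not overlap, which is a useful descriptive point to make in an answer: the typical scores in the two conditions are clearly separated, not just the means.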

ADVICE ON EVALUATING THE GROUP STANDARD DEVIATIONS

A number of different approaches can be used to structure a response to this question.

A strong answer should begin by clearly summarising the study's main findings. The most obvious starting point is the difference in the means. Participants working alone produced a mean of 14 ideas, compared with 8 in the group condition. This indicates that individuals working alone generated almost twice as many ideas as those working in groups. However, the mean alone does not provide a complete picture of the data. The discussion should therefore move beyond the mean and examine the standard deviation. Standard deviation shows how spread out the scores are around the mean and therefore tells us how consistent the results were within each condition.

In this study, the individual condition had a very small standard deviation (SD = 1), showing that most participants produced a similar number of ideas. In contrast, the group condition had a larger standard deviation (SD = 2.2), indicating much greater variability in performance. The greater spread of scores in the group condition suggests that participants differed considerably in the number of ideas they generated.

A key issue to consider is what this difference in variability might mean. A larger standard deviation suggests that performance in the group condition was less consistent. Some participants produced many ideas, while others produced very few. This raises questions about the reliability and internal validity of the group condition, as the task may not have been executed consistently across all participants.

When analysing the findings, it is important to refer directly to the distribution of scores. For example, in the individual condition, approximately 99.7% of participants produced between 11 and 17 ideas, showing a very tight clustering of results. In contrast, scores in the group condition ranged far more widely, with approximately 99.7% of participants producing between about 1.4 and 14.6 ideas. This large spread suggests that group performance was far less predictable.

Several methodological factors could explain this increased variability. Group brainstorming may introduce additional influences such as social inhibition, unequal participation, or dominant individuals controlling the discussion. These factors can produce inconsistent levels of idea generation across participants.

Therefore, the difference in both the means and the standard deviations must be considered together. While the mean suggests that individuals working alone generated more ideas, the standard deviation shows that their performance was also far more consistent. The much greater variability in the group condition may reflect difficulties in implementing the brainstorming task consistently, which raises questions about the internal validity of the group condition.

EXEMPLAR ANSWER TO THE GENERATING IDEAS QUESTION

In the 'working alone' condition, the mean was 14, nearly double the mean of 8 observed in the 'working in a group' condition. This indicates that participants working alone produced significantly more ideas for generating tourism. However, means alone do not account for outliers or the distribution of scores. It is conceivable that a small number of very high-scoring individuals in the solo condition inflated the mean, or that particularly low scores in one group depressed it. This highlights a limitation of using the mean in isolation when interpreting performance.

The standard deviation (SD) provides a more nuanced understanding by measuring variation from the mean. The SD in the "working alone" condition was 1, which is smaller than that in the "working in a group" condition (SD = 2.2), indicating a tighter clustering of scores around the mean. A smaller SD reflects greater consistency among participants’ responses, suggesting higher internal reliability within that condition, as performance is more stable and less influenced by extraneous variation. This consistency strengthens the internal validity of the findings, as it suggests that the independent variable (working alone) had a more uniform effect on the dependent variable (number of ideas generated).

Specifically, approximately 68% of participants in the working alone condition generated between 13 and 15 ideas, whereas 68% of participants in the group condition produced between 5.8 and 10.2 ideas. This demonstrates that scores in the individual condition were tightly clustered, reflecting high consistency. In contrast, the larger standard deviation in the group condition shows a much wider spread of scores, indicating lower consistency in performance. This greater variability reduces the internal reliability of the group condition, as participants did not respond uniformly, and it weakens internal validity, as it becomes less clear whether differences in performance are due solely to the independent variable or to uncontrolled situational factors.

The greater variability in the group condition likely reflects differences in group dynamics, making performance less predictable. Some individuals may contribute less due to social inhibition or lack of confidence, whereas others may dominate the discussion, leading to uneven participation. This introduces participant interaction effects as a confounding variable, reducing internal validity. As a result, the group condition may lack standardisation, meaning the task was not experienced in the same way by all participants, further weakening reliability.

A significant methodological limitation is the lack of a clear operationalisation of what constitutes an "idea," leading to inconsistent counts of valid responses. The study measures creativity solely through the quantity of ideas generated. This ambiguity reduces construct validity, as creativity is a multi-dimensional construct involving originality, usefulness, and flexibility, not just number. It is possible that participants working in groups generated fewer but more developed or higher-quality ideas, which this measure fails to capture. Consequently, although the mean suggests individuals produced more ideas, the study may lack construct validity in its measurement of creativity, meaning it cannot confidently claim that individuals were more creative, only that they produced more ideas.

CALCULATION OF PERCENTAGES

Providing percentages in the summary of a dataset can help the reader get a feel for the data at a glance without needing to read all of the results. For example, if two conditions compare the effects of revision vs no revision on test scores, a psychologist could report the percentage of participants who performed better after revising to provide a rough idea of the study's findings. Let’s imagine that out of 45 participants, 37 improved their scores by revising.

To calculate a percentage, the following calculation would be used:

Percentage = (Number of participants who improved ÷ Total number of participants) × 100

For the example above: (37 ÷ 45) × 100 = 82.2%

The bottom number in the formula should always be the total number in question (such as the total number of participants or the total possible score), with the top number being the number that meets the specific criteria (such as participants who improved or a particular score achieved). This answer is then multiplied by 100 to provide the percentage.
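The formula translates directly into a one-line function. This is a minimal sketch using the revision example (37 out of 45 participants); the function name `percentage` is an assumption for illustration.

```python
def percentage(part, total):
    """Percentage of `total` that meets the criterion counted in `part`."""
    return part / total * 100

improved = percentage(37, 45)
print(f"{improved:.1f}% of participants improved")
```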

CALCULATION OF PERCENTAGE INCREASE

First, the difference between the two numbers being compared must be calculated to determine the percentage increase. Then, the increase should be divided by the original figure and multiplied by 100 (see example calculation below).

For example, a researcher was interested in investigating the effect of listening to music on the time to read a text passage. When participants were asked to read with music playing in the background, the average time to complete the activity was 90 seconds. When participants undertook the activity without music, the average time to complete the reading was 68 seconds.

Calculate the percentage increase in the average (mean) time to read a text passage when listening to music. Show your calculations. (4 marks)

Increase = new number – original number

Increase = 90 – 68 = 22

% increase = increase ÷ original number × 100

% increase = 22 ÷ 68 × 100

22/68 = 0.3235

0.3235 × 100 = 32.35%

CALCULATION OF PERCENTAGE DECREASE

First, the difference between the two numbers must be calculated to determine the percentage decrease. Then, the decrease should be divided by the original figure and multiplied by 100 (see example calculation below).

For example, a researcher was interested in investigating the effect of chewing gum on the time taken to tie shoelaces. When participants were asked to tie a pair of shoelaces in trainers whilst chewing gum, the average time to complete the task was 20 seconds. When participants undertook the activity without chewing gum, the average time to tie the shoelaces was 17 seconds.

Calculate the percentage decrease in the average (mean) time taken to tie shoelaces when not chewing gum. Show your calculations. (4 marks)

Decrease = original number – new number

Decrease = 20 – 17 = 3

% decrease = decrease ÷ original number × 100

% decrease = 3 ÷ 20 × 100

3/20 = 0.15

0.15 × 100 = 15%
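Percentage increase and percentage decrease are the same calculation with the sign reversed, so one function can handle both. The sketch below uses the figures from the two worked examples; the function name `percentage_change` is an assumption for illustration.

```python
def percentage_change(original, new):
    """Positive result = percentage increase; negative = percentage decrease."""
    return (new - original) / original * 100

# Reading time: 68 seconds without music (original), 90 seconds with music (new).
reading = percentage_change(original=68, new=90)
# Shoelace time: 20 seconds with gum (original), 17 seconds without gum (new).
laces = percentage_change(original=20, new=17)

print(f"Reading time change:  {reading:+.2f}%")
print(f"Shoelace time change: {laces:+.2f}%")
```

The key step in both cases is choosing the correct original figure to divide by, since dividing by the new figure gives a different (and incorrect) answer.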

POSSIBLE EXAMINATION QUESTIONS

Name one measure of central tendency. (1 mark)

Exam Hint: As this question asks for a measure of central tendency to be named, no further elaboration is required to gain the mark here.

Which of the following is a measure of dispersion? (1 mark)

Mean

Median

Mode

Range

Calculate the mode for the following data set: 10, 2, 7, 6, 9, 10, 11, 13, 12, 6, 28, 10. (1 mark)

Calculate the mean from the following data set. Show your workings: 4, 2, 8, 10, 5, 9, 11, 15, 4, 16, 20 (2 marks)

Explain the meaning of standard deviation as a measure of dispersion. (2 marks)

Other than the mean, name one measure of central tendency and explain how you would apply this to a data set. (3 marks)

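Exam Hint: It is vitally important to take time to read the question fully. Because the question says "other than the mean", a description of calculating the mean will not gain credit. Name the median or the mode and explain how it would be worked out for a data set.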

Explain why the mode is sometimes a more appropriate measure of central tendency than the mean. (3 marks)

Explain one strength and one limitation of the range as a measure of dispersion. (4 marks)

Evaluate the use of the mean as a measure of central tendency. You may refer to strengths and limitations in your response. (4 marks)

A researcher was interested in investigating the number of minor errors made by male and female learner drivers on their driving tests. Ten males and ten females agreed to have their driving test performance submitted to the researchers. The table below depicts the findings: