VALIDITY

VALIDITY AND RELIABILITY

Reliability and validity underpin everything that psychologists do – without them, research would be worthless

The principles of validity and reliability are fundamental cornerstones of the scientific method.

Together, they are at the core of what is accepted as scientific proof by scientists and philosophers alike. By following a few basic principles, any experimental design will withstand rigorous questioning and scepticism.

SPECIFICATION:

Types of validity across all methods of investigation: face validity, concurrent validity, ecological validity and temporal validity. Assessment of validity. Improving validity.

WHAT IS VALIDITY

Validity refers to the accuracy, truthfulness and meaningfulness of psychological research or measurement. A study, test or concept is valid if it genuinely measures or represents what it claims to measure.

Psychology frequently investigates constructs that are not directly observable, such as intelligence, attachment, depression or love. Because these cannot be physically measured, psychologists create operational definitions and measurement tools, for example, IQ tests, the Strange Situation, or clinical rating scales such as the Global Assessment of Functioning. Validity asks whether these tools actually capture the construct they are supposed to measure. The central question becomes: does an IQ test genuinely measure intelligence, or does it instead measure education level, cultural knowledge or test-taking ability?

Validity also applies to psychological definitions themselves. Psychologists must decide whether diagnostic categories such as schizophrenia, ADHD or oppositional defiant disorder represent genuine psychological conditions or whether they are labels applied to behaviours that society finds problematic. In this sense, validity concerns whether psychological concepts correspond to something real rather than being artificial classifications. For example, the debate around oppositional defiant disorder asks whether it reflects a distinct disorder or simply extreme naughtiness interpreted through a clinical framework.

Validity determines whether research findings can be believed. Consider asking 100 students face-to-face whether they pick their nose and receiving 100 “no” responses. The results appear clear, but they are unlikely to be valid because participants may lie to protect their social image. The study, therefore, measures social desirability rather than genuine behaviour. This example shows how response bias can undermine validity even when the data appear straightforward.

Formally, validity is the extent to which research methods, measurements and conclusions accurately represent the psychological reality they are intended to investigate. Without validity, results lack scientific meaning because they do not truthfully answer the research question

VALIDITY AFFECTS RELIABILITY

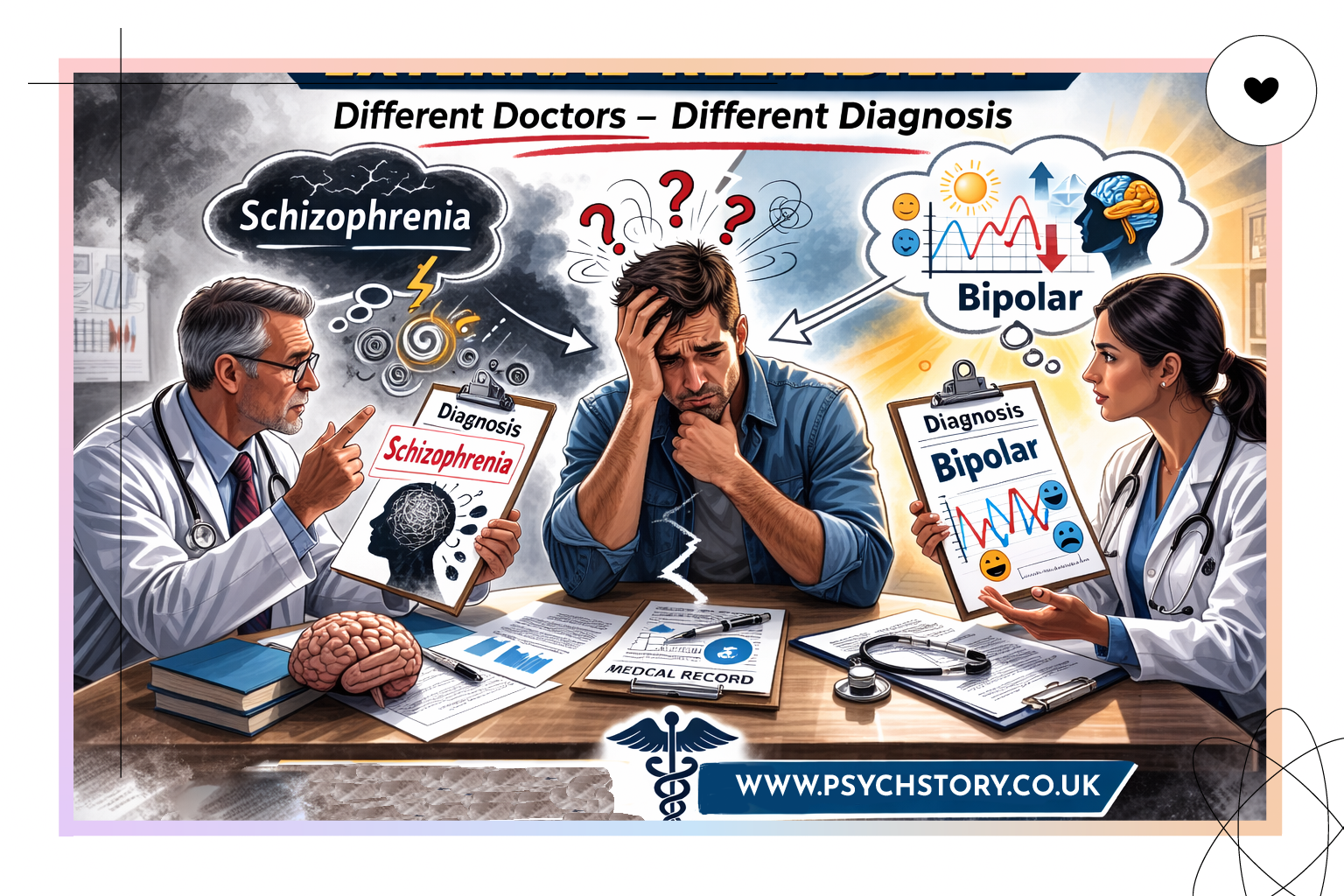

Validity affects reliability because reliability depends on a clear, valid construct to measure. If a concept is not valid or lacks a stable definition, it cannot be measured consistently. For example, if schizophrenia changes definition over time or lacks a concrete meaning, clinicians cannot reliably diagnose it because they may be identifying different things under the same label. Diagnostic disagreement may therefore reflect problems with the construct's validity rather than poor reliability among doctors.

In simple terms, you cannot expect consistent measurement of something that is not clearly defined. It is like using broken scales and expecting them to weigh the same as someone else’s. If the measure itself is invalid, reliable results are unlikely.

THEER ARE TWO MAIN TYPES OF VALIDITY

EXTERNAL VALIDITY: Everything outside the research study, e.g., Population, ecological, temporal, and mundane realism.

INTERNAL VALIDITY: Anything inside the research that does not measure what it is supposed to measure, for example, tests, questionnaires, instruments, and scales.

WHAT IS EXTERNAL VALIDITY?

Can the results be applied outside of the experiment? Are they valid?

External validity is one of the most difficult validity types to achieve and is at the foundation of every good experimental design. Many scientific disciplines, especially the social sciences, face a long battle to demonstrate that their findings reflect the wider population in real-world settings. The main criterion of external validity is generalisability, and whether results obtained from a small sample, often in laboratory settings, can be extended to predict the entire population. In 1966, Campbell and Stanley proposed the commonly accepted definition of external validity.

“External validity asks the question of generalisability: To what populations, settings, treatment variables and measurement variables can this effect be generalised?”

External validity is usually split into two distinct types, population validity and ecological validity, and they are both essential elements in judging the strength of an experimental design.

In a nutshell……Can the findings be generalised beyond the context of the research situation?

TYPES OF EXTERNAL VALIDITY

POPULATION VALIDITY

Population validity asks whether we can generalise from the sample to the population.

A sample is a small subset taken from a population. Researchers collect data from the sample and then use those findings to make inferences about the wider population. The population is the entire group we are trying to understand. The issue is therefore whether the people studied are sufficiently representative of the wider group to justify those inferences.

The question is not about numbers but about whether the sample reflects the kinds of people found in real society. Does the sample include variation in age, culture, gender, social class, ethnicity, family structure, ability and life experience? If not, conclusions may only describe that particular group rather than people generally.

Milgram’s obedience study clearly illustrates the problem. Participants were American male volunteers from a specific cultural and historical context, yet the findings are often treated as statements about human obedience more broadly. Population validity asks whether behaviour demonstrated by that sample can legitimately be applied to women, different cultures, or different historical periods where attitudes toward authority may differ.

Zimbardo’s Stanford Prison Experiment raises a similar issue. Participants were psychologically screened, and male students were placed in an unusual simulated environment. Generalising from students temporarily role-playing to behaviour in real prison systems assumes that the sample represents populations who live and work within prisons, which may not be justified.

Population validity, therefore, concerns the legitimacy of moving from what was observed in the sample to claims about the wider population. If the sample does not adequately reflect that population, generalisation becomes weak even if the study itself is well conducted

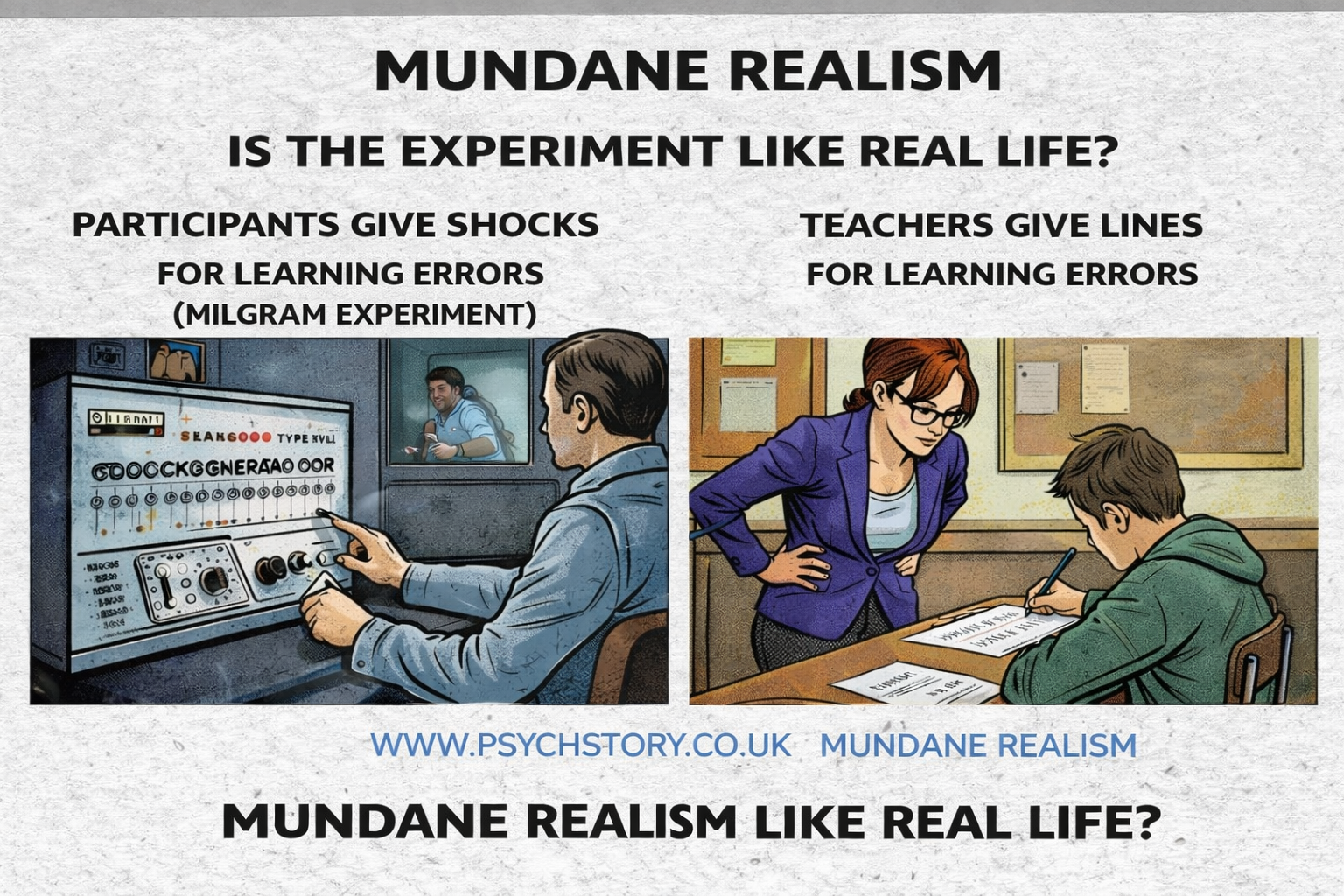

MUNDANE REALISM:

Mundane realism simply asks: Does the activity resemble ordinary life behaviour?

Mundane realism refers to whether the situation and task used in a laboratory study resemble real-life behaviour. It asks a simple question: can behaviour observed under artificial laboratory conditions realistically tell us how people would behave outside the experiment?

Many psychological studies take place in highly controlled laboratory environments. Memory experiments, for example, often require participants to learn lists of words or long strings of digits while seated in quiet testing rooms. Likewise, Milgram’s obedience study was conducted in the laboratories of Yale University. The issue raised by mundane realism is whether behaviour produced in such artificial situations reflects behaviour in everyday life.

Milgram provides a clear illustration. His study lacked mundane realism because people do not give another person a fatal electric shock simply because that person got a word pair wrong. The situation was deliberately artificial. Milgram had to be inventive because he was attempting to investigate unjust obedience, yet he could not ethically recreate the real historical circumstances associated with the Holocaust. He therefore accepted that the task itself did not resemble real life.

This is where mundane realism is often confused with ecological validity. Ecological validity refers not to whether the task looks realistic, but to whether the findings can be applied to real-world situations. A study may lack mundane realism yet still possess ecological validity if the psychological processes identified operate beyond the laboratory.

Miller’s “magic number seven” research demonstrates this distinction. Memorising long digit strings lacks mundane realism because people are rarely motivated to learn meaningless numbers unless placed in a real situation, such as needing to remember a phone number of a date after losing access to their phone. Despite this, research consistently shows that short-term memory capacity is approximately 7 items, plus or minus 2. The task is artificial, yet the finding applies broadly to everyday memory, meaning ecological validity remains strong even though mundane realism is low.

Milgram argued similarly that although his study lacked mundane realism, it still possessed ecological validity because obedience to authority may operate in real-life social hierarchies. However, this claim can be challenged. In Milgram’s proximity condition, participants were less willing to administer shocks when the learner was face-to-face, suggesting empathy reduces obedience. In real Nazi Germany, proximity to Jewish victims did not reliably lead SS soldiers to refuse participation. If real-world behaviour does not match laboratory findings, one could argue that Milgram’s study lacks ecological validity and mundane realism.

It is therefore essential to maintain the distinction. Mundane realism concerns whether the experimental task resembles everyday life. Ecological validity concerns whether the conclusions drawn from the study apply to everyday life. A study may have one without the other, both, or neither

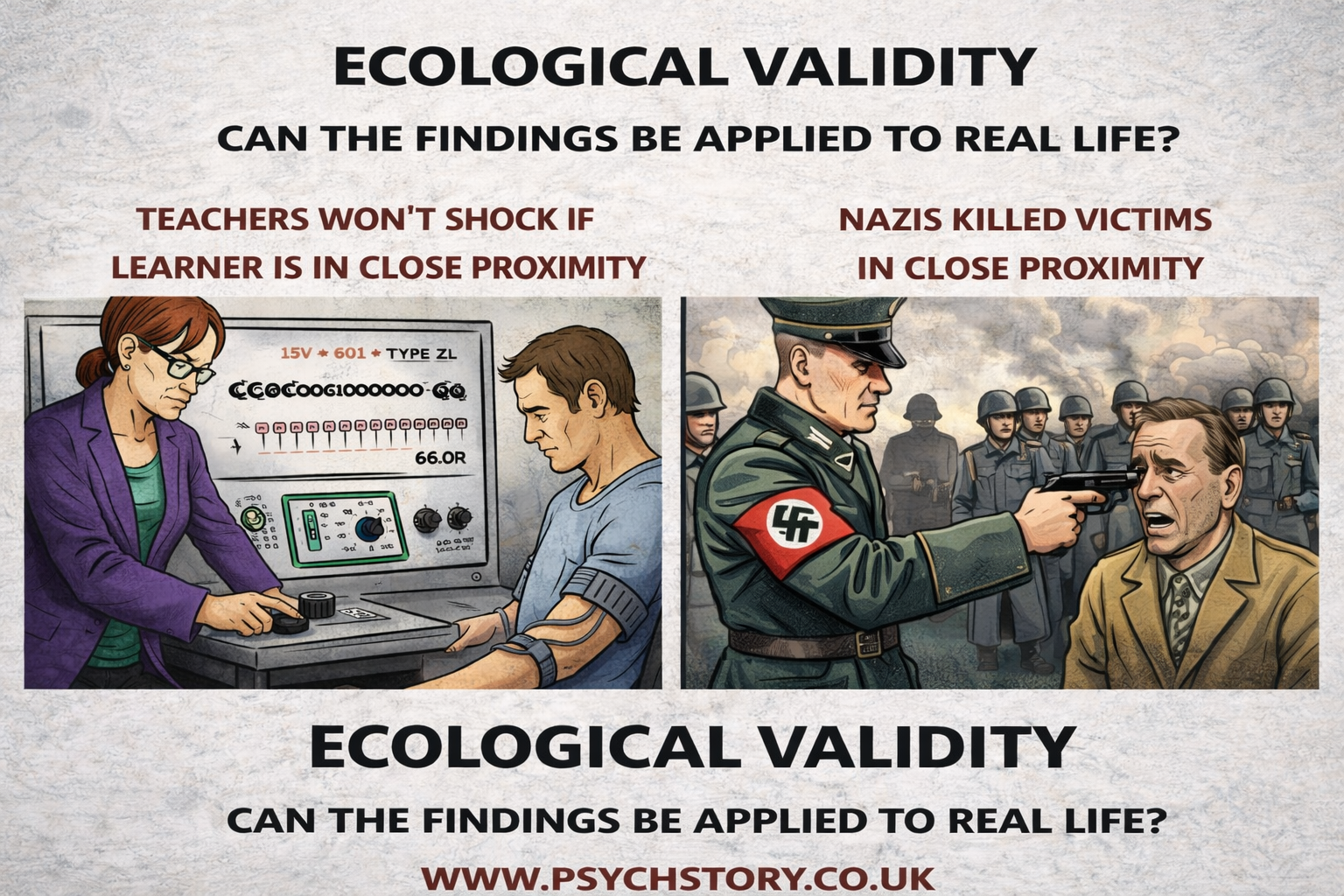

ECOLOGICAL VALIDITY (CONTRAST WITH MUNDANE REALISM)

Ecological validity refers to whether a study's findings can be applied to real-life situations, even if the experimental task itself is artificial and does or does not lack mundane realism

This is where students and teachers often confuse concepts.

A study can lack mundane realism but still possess ecological validity if the psychological processes identified operate in real-world contexts.

Milgram argued precisely this. Although giving electric shocks lacks mundane realism, he believed the underlying psychological mechanisms of obedience to authority could explain behaviour outside the laboratory. The study was artificial in form but intended to reveal genuine social processes.

However, ecological validity can also be questioned. In Milgram’s proximity condition, obedience decreased when participants were physically close to the learner, suggesting empathy reduces harmful obedience. Yet in real Nazi Germany, proximity to victims did not consistently lead SS soldiers to refuse participation. Physical closeness did not reliably trigger empathy or resistance. This poses a challenge to ecological validity because real-world behaviour does not fully align with laboratory findings.

Therefore, Milgram may be interpreted as lacking mundane realism and possibly ecological validity depending on how a real-world comparison is made.

The distinction must remain clear:

Mundane realism = does the task look like real life?

Ecological validity = do the findings apply to real life?

They are related but not identical.

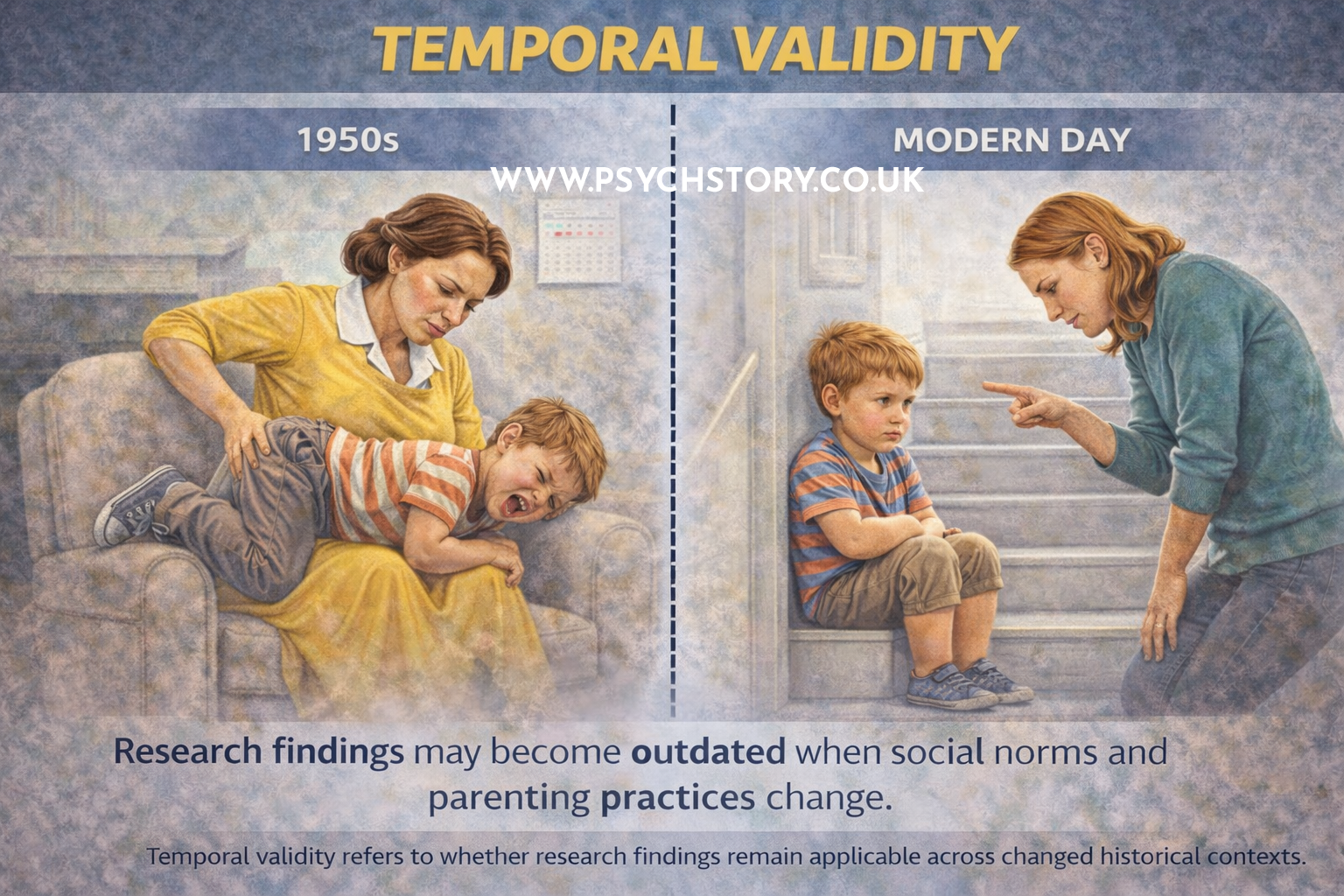

TEMPORAL VALIDITY (HISTORICAL VALIDITY / ZEITGEIST)

Temporal validity asks whether findings endure over time or whether they are specific to the historical period in which the research was conducted.

Studies such as Asch’s conformity research and Milgram’s obedience experiments were conducted in specific social contexts decades ago. Behaviour changes with historical context. Wars and national crises, for example, often increase conformity because societies become more unified. Ainsworth’s attachment research was conducted over forty years ago, when family structures and parenting roles differed from those of many modern families. Temporal validity therefore concerns whether findings still apply under contemporary social conditions.

ASSESSING AND IMPROVING EXTERNAL VALIDITY

External validity cannot be tested directly with a single procedure or statistical test. Unlike reliability or internal validity, it is not checked within the study itself. Instead, external validity refers to the extent to which findings can be generalised beyond the original research context. It is therefore evaluated by asking whether the results still hold when applied to different people, settings or time periods. In other words, external validity is judged by the scope of application of the findings rather than by a specific methodological technique.

Because generalisation cannot be demonstrated within a single study, external validity is assessed by examining whether similar outcomes occur when research is conducted under different conditions. If findings remain consistent across different populations, environments or historical contexts, confidence increases that the results reflect broader psychological processes rather than features unique to one particular study. External validity is improved when researchers avoid overly narrow samples, consider real-world applicability when designing tasks, and examine whether conclusions remain meaningful outside the original laboratory situation. If results only occur under one specific set of conditions, external validity is limited, regardless of how well controlled the original study may have been

INTERNAL VALIDITY

Internal validity refers to whether a research study is accurate and sound within the experiment itself. It asks whether the study genuinely measures what it is supposed to measure and whether the results are truly caused by the independent variable rather than by mistakes, bias or uncontrolled factors.

In simple working terms, internal validity concerns anything inside a research study that can be “cocked up” and lead researchers to measure the wrong thing.

Psychologists design studies to test specific ideas, but many aspects of research can undermine validity. The procedures, tests, questionnaires, interviews, observations and measurement techniques must all accurately capture the intended variable. If these methods measure something other than what they claim to measure, the findings become invalid.

Internal validity, therefore, asks two core questions:

Are the procedures valid within the experiment?

Can any observed effect genuinely be attributed to the manipulation of the independent variable rather than another uncontrolled influence?

A study has high internal validity when the research design is sound, measurements are accurate, and alternative explanations for the results have been minimised

EXAMPLES OF POOR INTERNAL VALIDITY

Below are additional examples of internal validity problems, structured in the same analytical style as your attachment example.

INTERNAL VALIDITY OF ATTACHMENT CLASSIFICATIONS

Validity is an issue because the Strange Situation assumes that infant behaviour has the same meaning across situations and cultures, whereas the same behaviour can be interpreted differently.

Insecure resistant (ambivalent) attachment is classified when infants show high distress during separation and are difficult to console on reunion. However, this behaviour is poorly operationalised because it is unclear what causes it. The distress may result from inconsistent caregiving, in which the child cannot predict the caregiver’s response, or from overprotective caregiving, in which constant parental proximity prevents the child from developing independence. The Strange Situation cannot distinguish between these explanations, meaning the classification describes behaviour but does not clearly identify its psychological cause. If researchers cannot tell what produces the behaviour, the measure lacks validity.

EXTERNAL VALIDITY OF ATTACHMENT CLASSIFICATIONS

Cultural findings further demonstrate the problem. In Japan, infants in Takahashi’s study showed extreme distress during separation. Within the Strange Situation framework, this was interpreted as a resistant attachment pattern. However, Japanese infants typically experience continuous close contact with caregivers through co-sleeping and constant proximity. Separation is therefore unusually stressful, so the behaviour reflects normal cultural experience rather than insecurity. The same behaviour is interpreted as insecure in Western contexts but normal in Japanese contexts, raising doubts about whether attachment insecurity is actually being measured.

A similar issue occurs with avoidant attachment in Germany. German parenting encourages independence and emotional self-reliance. German infants often show low proximity seeking and reduced reunion contact, leading to classification as insecure avoidant under Western criteria. Yet within German culture, this behaviour is considered desirable independence, not insecurity. Again, the same behaviour is interpreted as insecurity in American samples but as normal development in German contexts.

The validity problem, therefore, arises because attachment classifications may reflect cultural expectations or different caregiving styles rather than true attachment security. If identical behaviours receive different meanings depending on cultural assumptions, it becomes unclear whether the Strange Situation is measuring attachment itself or culturally specific interpretations of child behaviour

VALIDITY OF SCHIZOPHRENIA DIAGNOSIS

One major issue affecting the internal validity of schizophrenia research is that the definition of schizophrenia has changed repeatedly across diagnostic manuals. Symptoms once considered central, such as subtypes (paranoid, catatonic, disorganised), have been removed or redefined over time. This raises a fundamental validity problem: if the definition changes, are researchers studying the same disorder across studies?

For example, a patient diagnosed with schizophrenia under DSM III criteria might not meet DSM 5 criteria. This means research findings across decades may not be directly comparable because the construct itself has shifted. The variable being measured is unstable, weakening internal validity because researchers cannot be certain they are investigating a single, consistent condition.

Furthermore, schizophrenia shares symptoms with other disorders, such as bipolar disorder or severe depression with psychotic features. If diagnostic boundaries overlap, studies claiming to measure schizophrenia may actually include heterogeneous groups with different underlying causes. This questions whether observed results truly relate to schizophrenia or to mixed psychopathology.

VALIDITY OF DEPRESSION MEASUREMENT

Depression is commonly measured using self-report inventories such as the Beck Depression Inventory. However, these scales rely on subjective interpretation of questions. Two individuals may endorse identical items for entirely different reasons. One person may report sleep disturbance due to depression, while another experiences insomnia because of work stress or physical illness.

If a depression scale captures general distress rather than clinical depression specifically, the study lacks internal validity. The research may claim to measure depression, but it is actually measuring negative mood more broadly.

VALIDITY OF OBEDIENCE IN MILGRAM’S STUDY

Milgram’s obedience experiments raise questions about the internal validity of the behaviour actually measured. Participants appeared to obey authority figures, but critics argue that some participants may have guessed the shocks were fake or believed no real harm was occurring.

If participants were complying with perceived experimental expectations rather than genuinely obeying authority in the face of moral conflict, the study may be measuring demand characteristics rather than obedience itself. The independent variable would not be producing the behaviour claimed, reducing internal validity.

VALIDITY OF MEMORY RESEARCH USING WORD LISTS

Laboratory studies of memory often use artificial word lists to study forgetting or recall. While researchers claim to measure memory processes, participants may instead use rehearsal strategies or guessing techniques specific to laboratory tasks.

If performance reflects task strategy rather than natural memory functioning, conclusions about human memory may lack internal validity because the experiment measures performance under artificial constraints rather than memory itself.

EXAMPLE: THE COMPUTER LEARNING STUDY

Internal validity is threatened because alternative explanations exist for performance differences. The computer group may try harder because they feel special (Hawthorne effect). The non-computer group may become competitive or demoralised. Teachers may compensate by offering extra help, parents may complain, and students may share information outside school. Any improvement could therefore result from motivation, expectations or social interaction rather than the teaching method itself. The study cannot confidently claim that the independent variable caused the result

OTHER THREATS TO INTERNAL VALIDITY

QUESTIONNAIRES AND TESTS THAT DO NOT MEASURE WHAT THEY CLAIM

Internal validity is threatened when a test measures the wrong construct. For example, an IQ item asks which is the odd one out: violin, cello, guitar, viola, drums. One participant selects drums because it is percussion, while another selects viola because they have never heard the word. Both responses involve reasoning, yet only one is scored correctly. Performance may therefore reflect vocabulary or cultural exposure rather than intelligence. The study claims to measure intelligence, but is partly measuring prior knowledge, reducing internal validity.

SOCIAL DESIRABILITY BIAS, EXPERIMENTER BIAS AND DEMAND CHARACTERISTICS

Internal validity is threatened because participants’ behaviour reflects expectations or impression management rather than genuine responses. The results no longer reflect the psychological variable being studied but instead reflect participants’ attempts to appear acceptable or helpful.

INCONSISTENT TESTING TIMES

Testing participants at different times of day introduces biological differences in alertness and fatigue. Performance changes may be driven by circadian rhythms rather than the independent variable, leading the study to measure time effects rather than the intended factor.

DIFFERENT TESTING ENVIRONMENTS

Changes in temperature, comfort, noise, or the setting's attractiveness affect concentration and motivation. If groups perform differently because one environment is more comfortable, the study measures environmental influence rather than the experimental manipulation.

FAILURE TO RANDOMLY ALLOCATE PARTICIPANTS

Without random allocation, groups may differ before the experiment begins (ability, motivation, personality). Any outcome differences may therefore result from pre-existing characteristics rather than the independent variable, weakening internal validity.

FAILURE TO COUNTERBALANCE

In repeated-measures designs, participants may improve with practice or worsen with fatigue. Performance changes are then caused by order effects instead of the experimental condition, meaning the study does not measure the intended variable.

NON UNIFORM STIMULI OR MATERIALS

Internal validity is threatened when stimuli are not standardised. For example, participants are asked to rate facial attractiveness, but some photographs show full-body images, others show only faces, some people are smiling while others are not, some are side profiles, some are distant shots, and image quality varies. Participants are no longer judging facial attractiveness alone. Instead, ratings may be influenced by body shape, friendliness conveyed by smiling, camera angle or photo quality. The study claims to measure facial attractiveness, but actually measures multiple uncontrolled factors, reducing internal validity.

INCONSISTENT TASK INSTRUCTIONS OR TASK DEMANDS

Internal validity is threatened when participants are not given identical instructions or equivalent tasks. For example, if one group is told to “work as quickly as possible” while another is told to “work carefully and accurately,” performance differences may reflect interpretation of instructions rather than ability or the independent variable. The study then measures differences in task understanding or motivation rather than the psychological construct under investigation.

POORLY DESIGNED RATING SCALES

Internal validity is threatened when the structure of a scale influences responses rather than participants’ true opinions. For example, using a rating scale with an odd number of options (such as 1 to 5) provides a middle value. Participants may select this central option to avoid making a judgement, appear neutral, or complete the task quickly. As a result, responses may reflect indecision or response bias rather than genuine attitudes. The study therefore measures tendency to choose the middle option instead of the psychological variable being investigated, reducing internal validity

ASSESSING AND IMPROVING INTERNAL VALIDITY

CONTENT VALIDITY

Refers to whether a test fully covers the area it claims to measure. The content should represent all relevant aspects of the construct rather than only a narrow section. Content validity is usually assessed by experts who systematically examine the items and judge whether they adequately reflect established knowledge and agreed standards within the field.

CONCURRENT VALIDITY

Assesses validity by comparing scores from a new measure with scores from an already established and accepted test taken at the same time. If both measures produce similar results, the new test is considered valid. For example, scores on a new intelligence test may correlate with those on an existing IQ test. A strong positive correlation supports validity, whereas a weak correlation suggests the new test may not measure the intended construct.

PREDICTIVE VALIDITY

Refers to whether a measure can successfully predict future outcomes related to the construct. For example, an intelligence test taken at age three may be evaluated by examining whether it predicts later academic achievement. Similarly, a clinical diagnosis may be assessed by whether it predicts recovery patterns or long-term functioning.

TEMPORAL VALIDITY

Concerns whether research findings remain accurate over time or whether they are specific to a particular historical period or cultural climate (Zeitgeist). A study has temporal validity if its conclusions still apply when repeated in a different era.

FACE VALIDITY

Refers to whether a measure appears, on the surface, to assess what it claims to measure. The items should look relevant and appropriate to participants and experts. For example, a depression questionnaire should include questions about mood, sleep or motivation rather than unrelated topics such as food preferences. Although face validity is subjective, it is often the first indication that a measure is appropriate.

CRITERION VALIDITY

Assesses validity by comparing a measure with an external criterion known to reflect the construct. For instance, IQ scores may be compared with academic performance. Criterion validity includes concurrent validity (comparison at the same time) and predictive validity (comparison with future outcomes).

CONSTRUCT VALIDITY

Refers to whether a test genuinely measures the theoretical construct it is intended to measure. Researchers examine whether results align with theoretical expectations about the construct. For example, if intelligence is understood as involving multiple cognitive abilities, an intelligence test should assess a range of skills rather than a single narrow ability. Construct validity, therefore, evaluates how well a measure reflects the underlying psychological theory

OTHER METHODS FOR IMPROVING VALIDITY

OTHER METHODS FOR IMPROVING VALIDITY

STANDARDISED INSTRUCTIONS AND PROCEDURES

All participants should receive identical instructions and experience the same procedures so that differences in results are caused by the independent variable rather than variations in how the study is conducted.

CONTROL OF EXTRANEOUS VARIABLES

Researchers must identify and control variables that could unintentionally influence behaviour. Removing alternative influences increases confidence that the independent variable caused the outcome.

REDUCING DEMAND CHARACTERISTICS

Participants may guess the study's aim and alter their behaviour accordingly, leading to non-genuine responses. Validity can be improved through placebo conditions, single-blind procedures where appropriate, and by avoiding disclosure of the hypothesis by using general or prior consent rather than revealing specific aims.

REDUCING THE HAWTHORNE EFFECT

Participants sometimes perform differently simply because they know they are being studied. Minimising researcher attention, using naturalistic settings where possible, or disguising the true purpose of the research can help ensure behaviour remains genuine.

REDUCING SOCIAL DESIRABILITY BIAS

Participants may respond in ways that present themselves in a positive light rather than truthfully. Validity improves when anonymity or confidential response methods are used, such as anonymous questionnaires or private response systems.

CONTROLLING INVESTIGATOR EFFECTS

Participants may react to researcher characteristics such as status, attractiveness, age or manner. Matching researchers to participants where possible and training investigators to remain neutral reduces unintended influence on behaviour.

REDUCING INVESTIGATOR BIAS

Researchers may unintentionally signal expected outcomes. Single-blind or double-blind procedures prevent investigators from influencing participants or from interpreting behaviour in line with expectations.

REDUCING OBSERVER BIAS

Observers may interpret behaviour subjectively. Clearly operationalised behavioural definitions ensure observations are objective and consistently applied.

CONTROLLING INDIVIDUAL DIFFERENCES

Participants naturally differ in ability, personality and experience. Matched-pairs designs help ensure that groups are comparable, thereby improving validity.

MINIMISING CONSTANT ERRORS

Constant errors affect all participants equally and systematically distort results. For example, if all participants are unwell during one condition, performance differences may reflect illness rather than the experimental manipulation. Careful control of testing conditions reduces this risk.

LIMITING RANDOM ERRORS

Random errors affect only some participants at unpredictable times. Using sufficiently large samples helps ensure these effects balance out and do not distort overall findings.

REDUCING PARTICIPANT ALLOCATION BIAS

Researchers choosing who enters each condition can unintentionally create group differences. Random allocation ensures groups are equivalent at the start of the study.

CONTROLLING ORDER EFFECTS

In repeated-measures designs, participants may improve with practice or decline due to fatigue. Counterbalancing the order of conditions ensures performance changes are not mistaken for experimental effects